
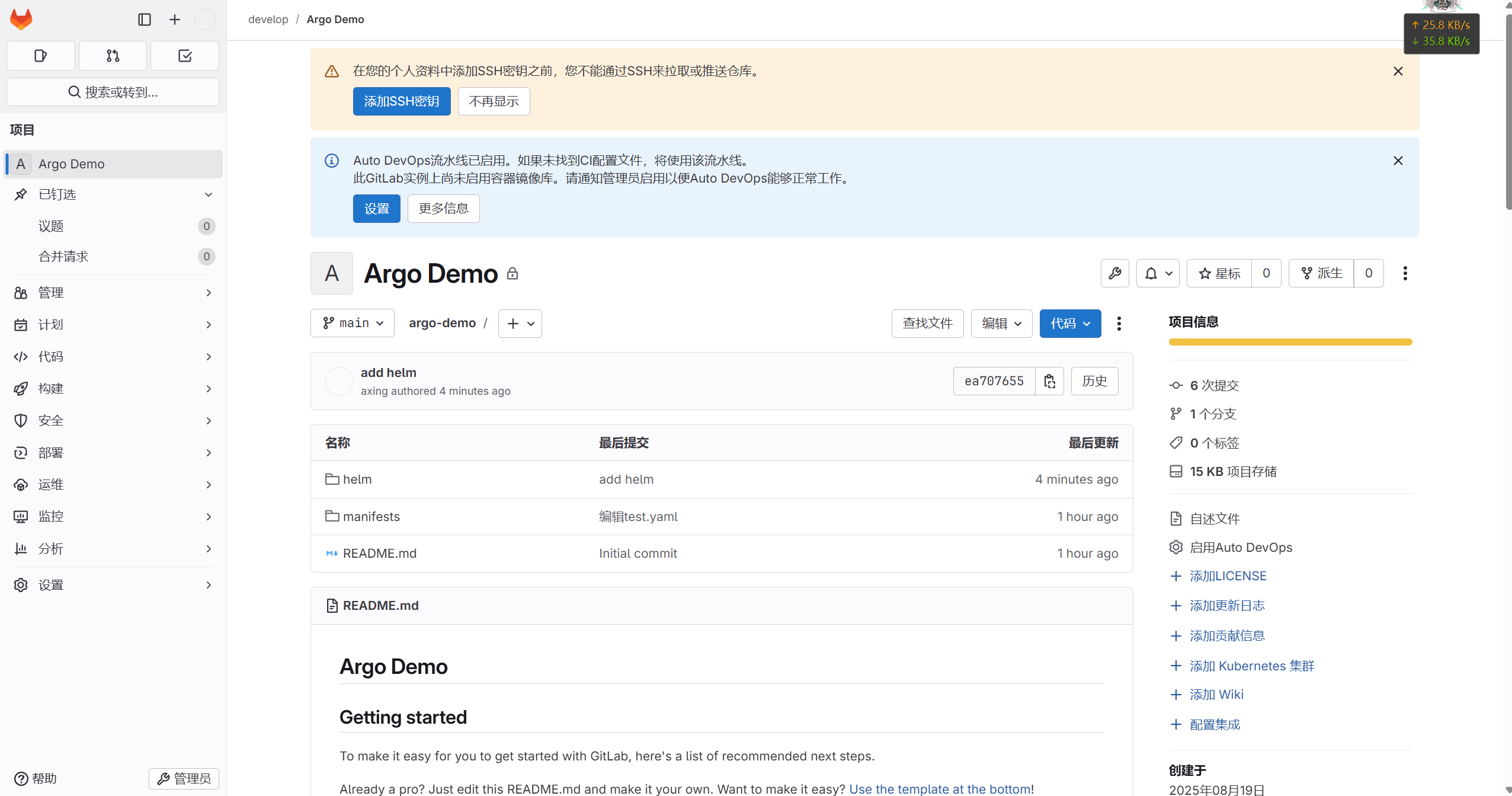

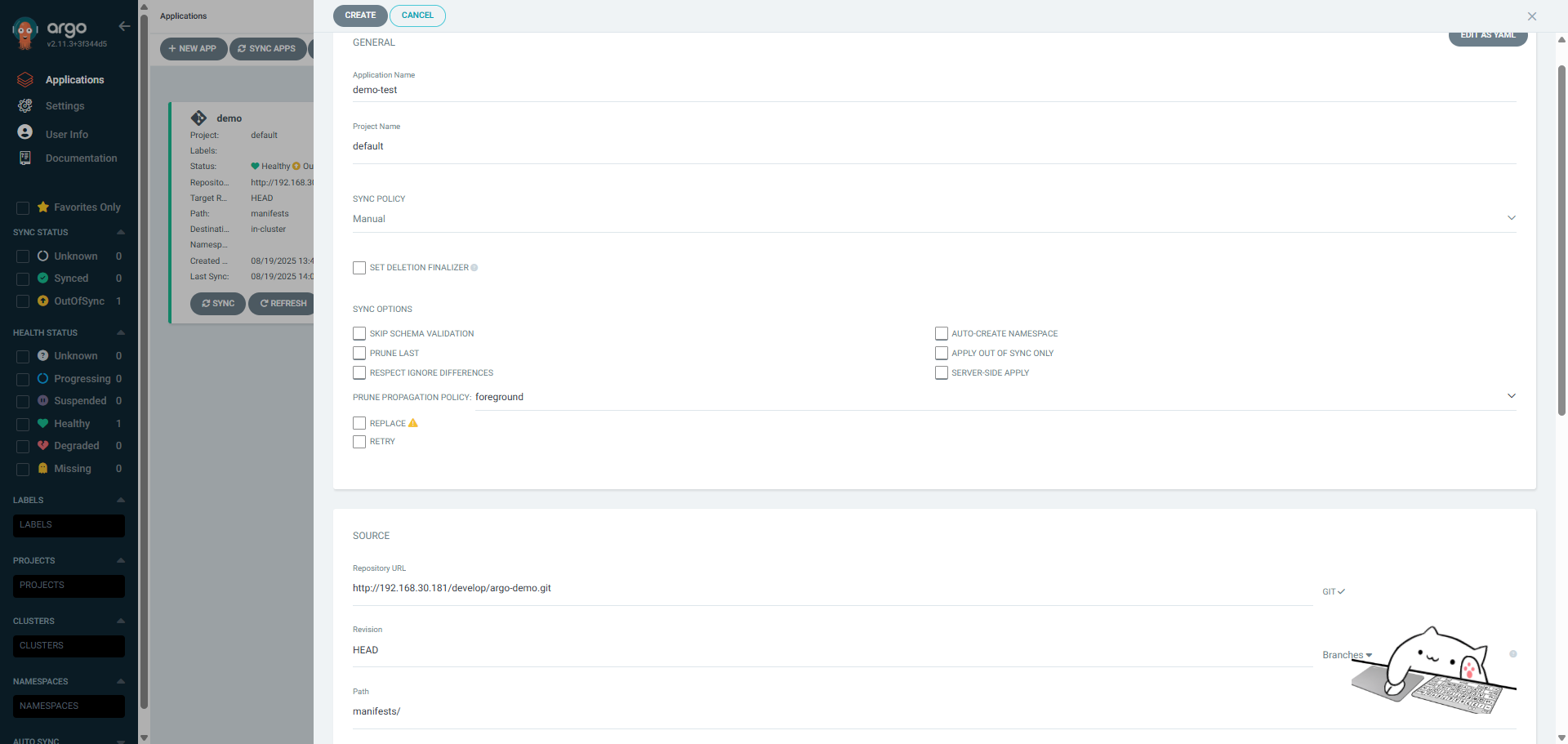

Helm App Creation

I. GitLab repository configuration

1.1 Clone the code

    root@k8s-01:~/argocd# cd /opt/
    root@k8s-01:/opt# ls
    cni  containerd
    root@k8s-01:/opt# git clone http://192.168.30.181/develop/argo-demo.git
    Cloning into 'argo-demo'...
    Username for 'http://192.168.30.181': root
    Password for 'http://root@192.168.30.181':
    remote: Enumerating objects: 19, done.
    remote: Counting objects: 100% (19/19), done.
    remote: Compressing objects: 100% (16/16), done.
    remote: Total 19 (delta 3), reused 0 (delta 0), pack-reused 0 (from 0)
    Receiving objects: 100% (19/19), 4.49 KiB | 1.12 MiB/s, done.
    Resolving deltas: 100% (3/3), done.
    root@k8s-01:/opt# cd argo-demo/
    root@k8s-01:/opt/argo-demo# ls
    manifests  README.md

1.2 Create the Helm application

Create a chart named helm:

    root@k8s-01:/opt/argo-demo# helm create helm
    Creating helm
    root@k8s-01:/opt/argo-demo# ls
    helm  manifests  README.md
    root@k8s-01:/opt/argo-demo# tree helm
    helm
    ├── charts
    ├── Chart.yaml
    ├── templates
    │   ├── deployment.yaml
    │   ├── _helpers.tpl
    │   ├── hpa.yaml
    │   ├── ingress.yaml
    │   ├── NOTES.txt
    │   ├── serviceaccount.yaml
    │   ├── service.yaml
    │   └── tests
    │       └── test-connection.yaml
    └── values.yaml
    3 directories, 10 files

Modify the Helm configuration:

    [root@tiaoban argo-demo]# cd helm/
    [root@tiaoban helm]# vim Chart.yaml
    appVersion: "v1" # change the default image version to v1
    [root@tiaoban helm]# vim values.yaml
    image:
      repository: ikubernetes/myapp # change the image repository

Validate the chart:

    root@k8s-01:/opt/argo-demo# helm lint helm
    ==> Linting helm
    [INFO] Chart.yaml: icon is recommended
    1 chart(s) linted, 0 chart(s) failed

1.3 Push the code

The first commit fails because no Git identity is configured yet; set user.email and user.name, then commit again.

    root@k8s-01:/opt/argo-demo# git add .
    root@k8s-01:/opt/argo-demo# git commit -m "add helm"
    Author identity unknown
    *** Please tell me who you are.
    Run
      git config --global user.email "you@example.com"
      git config --global user.name "Your Name"
    to set your account's default identity.
    Omit --global to set the identity only in this repository.
    fatal: unable to auto-detect email address (got 'root@k8s-01.(none)')
    root@k8s-01:/opt/argo-demo# git config --global user.email "790731@qq.com"
    root@k8s-01:/opt/argo-demo# git config --global user.name "axing"
    root@k8s-01:/opt/argo-demo# git commit -m "add helm"
    [main ea70765] add helm
     11 files changed, 450 insertions(+)
     create mode 100644 helm/.helmignore
     create mode 100644 helm/Chart.yaml
     create mode 100644 helm/templates/NOTES.txt
     create mode 100644 helm/templates/_helpers.tpl
     create mode 100644 helm/templates/deployment.yaml
     create mode 100644 helm/templates/hpa.yaml
     create mode 100644 helm/templates/ingress.yaml
     create mode 100644 helm/templates/service.yaml
     create mode 100644 helm/templates/serviceaccount.yaml
     create mode 100644 helm/templates/tests/test-connection.yaml
     create mode 100644 helm/values.yaml
    root@k8s-01:/opt/argo-demo# git push
    Username for 'http://192.168.30.181': root
    Password for 'http://root@192.168.30.181':
    Enumerating objects: 17, done.
    Counting objects: 100% (17/17), done.
    Delta compression using up to 8 threads
    Compressing objects: 100% (15/15), done.
    Writing objects: 100% (16/16), 6.00 KiB | 6.00 MiB/s, done.
    Total 16 (delta 0), reused 0 (delta 0), pack-reused 0
    To http://192.168.30.181/develop/argo-demo.git
       293d75f..ea70765  main -> main

1.4 View and verify

II. Argo CD configuration

2.1 Create a Helm-type app

Create the app through the Argo UI and fill in the following information:

2.2 View and verify

Check the application in Argo CD; the deployment has completed.

Log in to Kubernetes and check the resources:

    [root@tiaoban helm]# kubectl get pod -o wide
    NAME                         READY   STATUS    RESTARTS        AGE     IP            NODE    NOMINATED NODE   READINESS GATES
    demo-helm-585b5ddb66-bdbcr   1/1     Running   0               2m38s   10.244.3.31   work3   <none>           <none>
    rockylinux                   1/1     Running   13 (140m ago)   13d     10.244.1.7    work1   <none>           <none>
    [root@tiaoban helm]# kubectl get svc
    NAME         TYPE        CLUSTER-IP       EXTERNAL-IP   PORT(S)   AGE
    demo-helm    ClusterIP   10.105.202.171   <none>        80/TCP    2m41s
    kubernetes   ClusterIP   10.96.0.1        <none>        443/TCP   279d
    [root@tiaoban helm]# kubectl exec -it rockylinux -- bash
    [root@rockylinux /]# curl demo-helm
    Hello MyApp | Version: v1 | <a href="hostname.html">Pod Name</a>

Version update test

    # Modify the files in the Git repository to simulate a version update
    root@k8s-01:/opt/argo-demo# cd helm/
    root@k8s-01:/opt/argo-demo/helm# ls
    charts  Chart.yaml  templates  values.yaml
    root@k8s-01:/opt/argo-demo/helm# vi Chart.yaml
    root@k8s-01:/opt/argo-demo/helm# ls
    charts  Chart.yaml  templates  values.yaml
    root@k8s-01:/opt/argo-demo/helm# vi values.yaml
    root@k8s-01:/opt/argo-demo/helm# ls
    charts  Chart.yaml  templates  values.yaml
    # Commit and push to the Git repository
    root@k8s-01:/opt/argo-demo/helm# git add .
    root@k8s-01:/opt/argo-demo/helm# git commit -m "update helm v2"
    [main 59dcb2d] update helm v2
     2 files changed, 3 insertions(+), 3 deletions(-)
    root@k8s-01:/opt/argo-demo/helm# git push
    Username for 'http://192.168.30.181': root
    Password for 'http://root@192.168.30.181':
    Enumerating objects: 9, done.
    Counting objects: 100% (9/9), done.
    Delta compression using up to 8 threads
    Compressing objects: 100% (5/5), done.
    Writing objects: 100% (5/5), 475 bytes | 475.00 KiB/s, done.
    Total 5 (delta 3), reused 0 (delta 0), pack-reused 0
    To http://192.168.30.181/develop/argo-demo.git
       ea70765..59dcb2d  main -> main

Check the Argo CD update history.

Access verification:

    [root@tiaoban helm]# kubectl exec -it rockylinux -- bash
    [root@rockylinux /]# curl demo-helm
    Hello MyApp | Version: v2 | <a href="hostname.html">Pod Name</a>
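
The form filled in through the Argo UI is not reproduced in the text, so here is a minimal sketch of what an equivalent declarative Application for the chart might look like, built as a Python dict and printed as JSON. The application name `demo-helm`, the `default` project, the automated sync policy, and the use of `values.yaml` are assumptions made for illustration; only the repository URL and the `helm` chart path come from the walkthrough above.

```python
import json


def helm_application(name, repo_url, chart_path, revision="HEAD",
                     dest_namespace="default"):
    """Build an Argo CD Application manifest for a Helm chart stored in Git."""
    return {
        "apiVersion": "argoproj.io/v1alpha1",
        "kind": "Application",
        "metadata": {"name": name, "namespace": "argocd"},
        "spec": {
            "project": "default",  # assumed project
            "source": {
                "repoURL": repo_url,
                "path": chart_path,  # directory that contains Chart.yaml
                "targetRevision": revision,
                "helm": {"valueFiles": ["values.yaml"]},
            },
            "destination": {
                "server": "https://kubernetes.default.svc",
                "namespace": dest_namespace,
            },
            # Assumed policy: sync automatically, without prune or self-heal.
            "syncPolicy": {"automated": {"prune": False, "selfHeal": False}},
        },
    }


manifest = helm_application(
    name="demo-helm",  # hypothetical application name
    repo_url="http://192.168.30.181/develop/argo-demo.git",
    chart_path="helm",
)
print(json.dumps(manifest, indent=2))
```

Saved to a file, the printed JSON could be applied with `kubectl apply -f`, which accepts JSON as well as YAML.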


Directory App Creation and Configuration

I. App creation

1.1 Create via the web UI

1.2 Create via the CLI

Besides the web UI, applications can also be created with the Argo CD command-line tool.

    # Create the application
    root@k8s-01:~/argocd# argocd app create demo1 \
      --repo http://192.168.30.181/develop/argo-demo.git \
      --path manifests/ --sync-policy automatic --dest-namespace default \
      --dest-server https://kubernetes.default.svc --directory-recurse
    WARN[0000] Failed to invoke grpc call. Use flag --grpc-web in grpc calls. To avoid this warning message, use flag --grpc-web.
    application 'demo1' created
    # List applications
    root@k8s-01:~/argocd# argocd app list
    WARN[0000] Failed to invoke grpc call. Use flag --grpc-web in grpc calls. To avoid this warning message, use flag --grpc-web.
    NAME              CLUSTER                         NAMESPACE  PROJECT  STATUS     HEALTH       SYNCPOLICY  CONDITIONS                REPO                                         PATH        TARGET
    argocd/demo       https://kubernetes.default.svc             default  OutOfSync  Progressing  Manual      SharedResourceWarning(3)  http://192.168.30.181/develop/argo-demo.git  manifests   HEAD
    argocd/demo-test  https://kubernetes.default.svc             default  OutOfSync  Healthy      Manual      SharedResourceWarning(3)  http://192.168.30.181/develop/argo-demo.git  manifests/  HEAD
    argocd/demo1      https://kubernetes.default.svc  default    default  Synced     Healthy      Auto        <none>                    http://192.168.30.181/develop/argo-demo.git  manifests/
    # Check application status
    root@k8s-01:~/argocd# kubectl get application -n argocd
    NAME        SYNC STATUS   HEALTH STATUS
    demo        OutOfSync     Progressing
    demo-test   OutOfSync     Healthy
    demo1       Synced        Healthy
    # Trigger an immediate sync
    root@k8s-01:~/argocd# argocd app sync argocd/demo
    WARN[0000] Failed to invoke grpc call. Use flag --grpc-web in grpc calls. To avoid this warning message, use flag --grpc-web.
    TIMESTAMP                  GROUP       KIND          NAMESPACE  NAME   STATUS     HEALTH   HOOK  MESSAGE
    2025-08-19T07:00:05+00:00              Service       default    myapp  OutOfSync  Healthy
    2025-08-19T07:00:05+00:00  apps        Deployment    default    myapp  OutOfSync  Healthy
    2025-08-19T07:00:05+00:00  traefik.io  IngressRoute  default    myapp  OutOfSync
    2025-08-19T07:00:05+00:00              Service       default    myapp  Synced     Healthy
    2025-08-19T07:00:05+00:00              Service       default    myapp  Synced     Healthy        service/myapp configured
    2025-08-19T07:00:05+00:00  apps        Deployment    default    myapp  OutOfSync  Healthy        deployment.apps/myapp configured
    2025-08-19T07:00:05+00:00  traefik.io  IngressRoute  default    myapp  OutOfSync                 ingressroute.traefik.io/myapp configured
    2025-08-19T07:00:05+00:00  apps        Deployment    default    myapp  Synced     Healthy        deployment.apps/myapp configured
    2025-08-19T07:00:05+00:00  traefik.io  IngressRoute  default    myapp  Synced                    ingressroute.traefik.io/myapp configured
    Name:               argocd/demo
    Project:            default
    Server:             https://kubernetes.default.svc
    Namespace:
    URL:                https://argocd.local.com:30443/applications/argocd/demo
    Source:
    - Repo:             http://192.168.30.181/develop/argo-demo.git
      Target:           HEAD
      Path:             manifests
    SyncWindow:         Sync Allowed
    Sync Policy:        Manual
    Sync Status:        Synced to HEAD (293d75f)
    Health Status:      Healthy
    Operation:          Sync
    Sync Revision:      293d75f441403c3f19c888df50939ec3a9e6f1fa
    Phase:              Succeeded
    Start:              2025-08-19 07:00:05 +0000 UTC
    Finished:           2025-08-19 07:00:05 +0000 UTC
    Duration:           0s
    Message:            successfully synced (all tasks run)
    GROUP       KIND          NAMESPACE  NAME   STATUS  HEALTH   HOOK  MESSAGE
                Service       default    myapp  Synced  Healthy        service/myapp configured
    apps        Deployment    default    myapp  Synced  Healthy        deployment.apps/myapp configured
    traefik.io  IngressRoute  default    myapp  Synced                 ingressroute.traefik.io/myapp configured

1.3 Create from a YAML file

    [root@tiaoban ~]# cat demo.yaml
    apiVersion: argoproj.io/v1alpha1
    kind: Application
    metadata:
      name: demo
      namespace: argocd
    spec:
      destination:
        namespace: default
        server: 'https://kubernetes.default.svc'
      source:
        path: manifests # path to the YAML manifests
        repoURL: 'http://gitlab.local.com/devops/argo-demo.git' # repository to sync from
        targetRevision: 'master' # branch name
      sources: []
      project: default
      syncPolicy:
        automated:
          prune: false
          selfHeal: false
    [root@tiaoban ~]# kubectl apply -f demo.yaml
    application.argoproj.io/demo created

II. Application sync options

2.1 Sync policy configuration

SYNC POLICY: Argo CD can sync an application automatically when it detects a difference between the desired manifests in Git and the live state in the cluster. Automated sync is the core of the GitOps pull model: the CI/CD pipeline no longer needs direct access to the Argo CD API server to deploy. Enable it with AUTOMATED under Application > SYNC POLICY in the web UI, or with the CLI:

    argocd app set <APPNAME> --sync-policy automated

PRUNE RESOURCES: automatic deletion. With this option enabled, resources removed from the Git repo are also deleted from the cluster.

SELF HEAL: automatic healing. The Git repo state always wins, so manual changes made in the cluster do not persist.

2.2 AutoSync

The default sync interval is 180s. It can be changed by adding the timeout.reconciliation parameter to the argocd-cm ConfigMap.

Sync flow:

 1. Collect all apps that have auto-sync enabled
 2. Fetch the latest state from each app's Git repository
 3. Compare the Git state with the application state in the cluster
 4. If they are the same, do nothing and mark the app as synced
 5. If they differ, mark the app as out-of-sync

2.3 SyncOptions

 - Validate=false: disable kubectl validation
 - Replace=true: use kubectl replace instead of apply
 - PrunePropagationPolicy=background: cascading deletion policy (background, foreground, or orphan)
 - ApplyOutOfSyncOnly=true: sync only the resources that are out of sync, which reduces API load when an app has many objects
 - CreateNamespace=true: create the namespace
 - PruneLast=true: prune after the rest of the sync has finished
 - RespectIgnoreDifferences=true: honor the ignoreDifferences configuration during sync
 - ServerSideApply=true: apply on the server side (avoids failures with oversized manifests)

III. Application status

sync status

 - Synced: in sync
 - OutOfSync: not in sync

health status

 - Progressing: in progress
 - Suspended: the resource is suspended or paused
 - Healthy: the resource is healthy
 - Degraded: the resource has failed
 - Missing: the resource does not exist in the cluster
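
The five-step AutoSync flow described in section 2.2 can be modelled as a small loop. This is only a toy illustration of the comparison logic under simplifying assumptions (manifests reduced to plain dicts), not Argo CD's controller code; the `App` class, its fields, and the sample app names are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class App:
    name: str
    auto_sync: bool
    desired: dict  # manifests rendered from Git, keyed by resource name
    live: dict     # current cluster state, keyed the same way


def reconcile(apps):
    """Return {app name: "Synced" | "OutOfSync"} for auto-sync apps only."""
    status = {}
    for app in apps:
        if not app.auto_sync:  # step 1: only apps with auto-sync enabled
            continue
        # steps 2-3: compare the Git state with the cluster state
        if app.desired == app.live:
            status[app.name] = "Synced"     # step 4: nothing to do
        else:
            status[app.name] = "OutOfSync"  # step 5: flag the drift
    return status


apps = [
    App("auto-clean", True, {"svc": 1}, {"svc": 1}),
    App("auto-drift", True, {"svc": 2}, {"svc": 1}),
    App("manual", False, {"svc": 2}, {"svc": 1}),
]
print(reconcile(apps))
```

The `manual` app is skipped entirely, which mirrors step 1: apps without auto-sync are not part of this loop.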


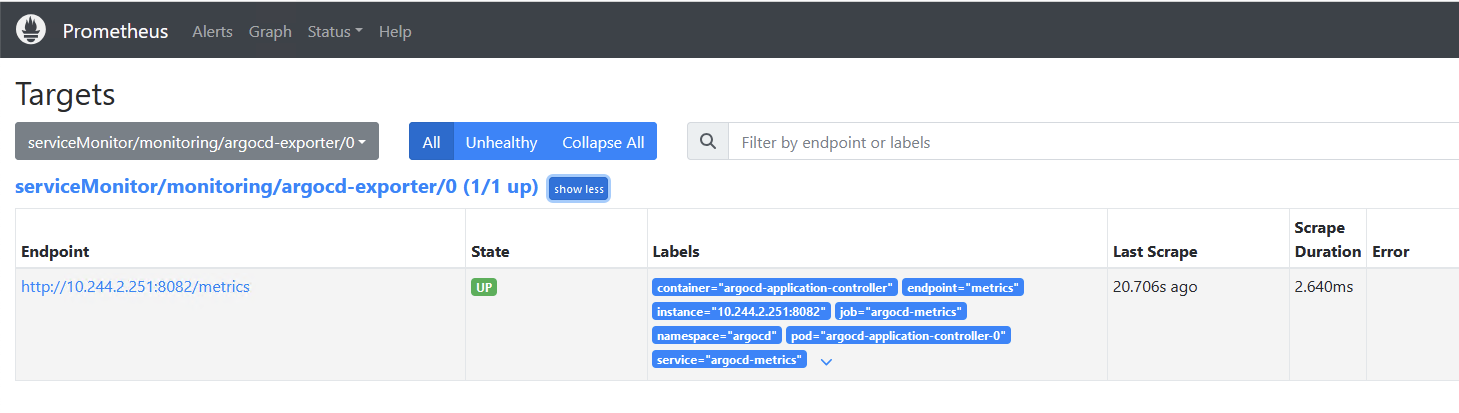

Argo CD Monitoring

Reference: https://argo-cd.readthedocs.io/en/stable/operator-manual/metrics/

I. Configure targets

1.1 View the metrics

    [root@tiaoban ~]# kubectl get svc -n argocd
    NAME                                      TYPE        CLUSTER-IP       EXTERNAL-IP   PORT(S)                      AGE
    argocd-applicationset-controller          ClusterIP   10.97.81.94      <none>        7000/TCP,8080/TCP            27d
    argocd-dex-server                         ClusterIP   10.106.72.83     <none>        5556/TCP,5557/TCP,5558/TCP   27d
    argocd-metrics                            ClusterIP   10.103.26.87     <none>        8082/TCP                     27d
    argocd-notifications-controller-metrics   ClusterIP   10.105.181.100   <none>        9001/TCP                     27d
    argocd-redis                              ClusterIP   10.100.131.134   <none>        6379/TCP                     27d
    argocd-repo-server                        ClusterIP   10.100.123.80    <none>        8081/TCP,8084/TCP            27d
    argocd-server                             NodePort    10.106.11.146    <none>        80:30701/TCP,443:30483/TCP   27d
    argocd-server-metrics                     ClusterIP   10.105.164.150   <none>        8083/TCP                     27d
    [root@tiaoban ~]# kubectl exec -it rockylinux -- bash
    [root@rockylinux /]# curl argocd-metrics.argocd.svc:8082/metrics
    # HELP argocd_app_info Information about application.
    # TYPE argocd_app_info gauge
    argocd_app_info{autosync_enabled="true",dest_namespace="default",dest_server="https://kubernetes.default.svc",health_status="Healthy",name="blue-green",namespace="argocd",operation="",project="default",repo="http://gitlab.local.com/devops/argo-demo",sync_status="Synced"} 1
    # HELP argocd_app_reconcile Application reconciliation performance.
    # TYPE argocd_app_reconcile histogram
    argocd_app_reconcile_bucket{dest_server="https://kubernetes.default.svc",namespace="argocd",le="0.25"} 12
    argocd_app_reconcile_bucket{dest_server="https://kubernetes.default.svc",namespace="argocd",le="0.5"} 18
    argocd_app_reconcile_bucket{dest_server="https://kubernetes.default.svc",namespace="argocd",le="1"} 21
    argocd_app_reconcile_bucket{dest_server="https://kubernetes.default.svc",namespace="argocd",le="2"} 21
    argocd_app_reconcile_bucket{dest_server="https://kubernetes.default.svc",namespace="argocd",le="4"} 22
    argocd_app_reconcile_bucket{dest_server="https://kubernetes.default.svc",namespace="argocd",le="8"} 24

1.2 Create the ServiceMonitor resource

    apiVersion: monitoring.coreos.com/v1
    kind: ServiceMonitor
    metadata:
      name: argocd-exporter # ServiceMonitor name
      namespace: monitoring # namespace of the ServiceMonitor
    spec:
      jobLabel: argocd-exporter # Service label whose value is used as the job name
      endpoints: # scrape endpoints; this is a list, so several can be defined, each usually setting interval, path, and port
      - port: metrics # name (not number) of the port to scrape, matched on the Services chosen by spec.selector
        interval: 30s # scrape interval
        scheme: http # protocol
        path: /metrics # scrape path
      selector: # Service label selector
        matchLabels:
          app.kubernetes.io/name: argocd-metrics
      namespaceSelector: # namespaces to look in
        matchNames:
        - argocd

1.3 Verify the targets

II. View the data in Grafana

2.1 Import the dashboard

Reference: https://grafana.com/grafana/dashboards/14584-argocd/

2.2 View the data
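
Before wiring up Prometheus, it can help to sanity-check what the `argocd_app_info` series contains. The following is a minimal sketch that parses the text exposition format shown in section 1.1 and counts applications by sync and health status; the function name and the regex-based label parsing are illustrative choices, and the sample text is a single line copied from the curl output above.

```python
import re
from collections import Counter

# Matches key="value" pairs inside the {...} label set of a metric line.
LABEL_RE = re.compile(r'(\w+)="((?:[^"\\]|\\.)*)"')


def app_status_counts(metrics_text):
    """Count argocd_app_info series by (sync_status, health_status)."""
    counts = Counter()
    for line in metrics_text.splitlines():
        if not line.startswith("argocd_app_info{"):
            continue  # skip comments and other metric families
        labels = dict(LABEL_RE.findall(line))
        counts[(labels.get("sync_status"), labels.get("health_status"))] += 1
    return counts


SAMPLE = (
    "# HELP argocd_app_info Information about application.\n"
    "# TYPE argocd_app_info gauge\n"
    'argocd_app_info{autosync_enabled="true",dest_namespace="default",'
    'dest_server="https://kubernetes.default.svc",health_status="Healthy",'
    'name="blue-green",namespace="argocd",operation="",project="default",'
    'repo="http://gitlab.local.com/devops/argo-demo",sync_status="Synced"} 1\n'
)

for (sync, health), n in app_status_counts(SAMPLE).items():
    print(sync, health, n)
```

In practice the same text could be fetched from the `argocd-metrics` Service instead of the hard-coded sample.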


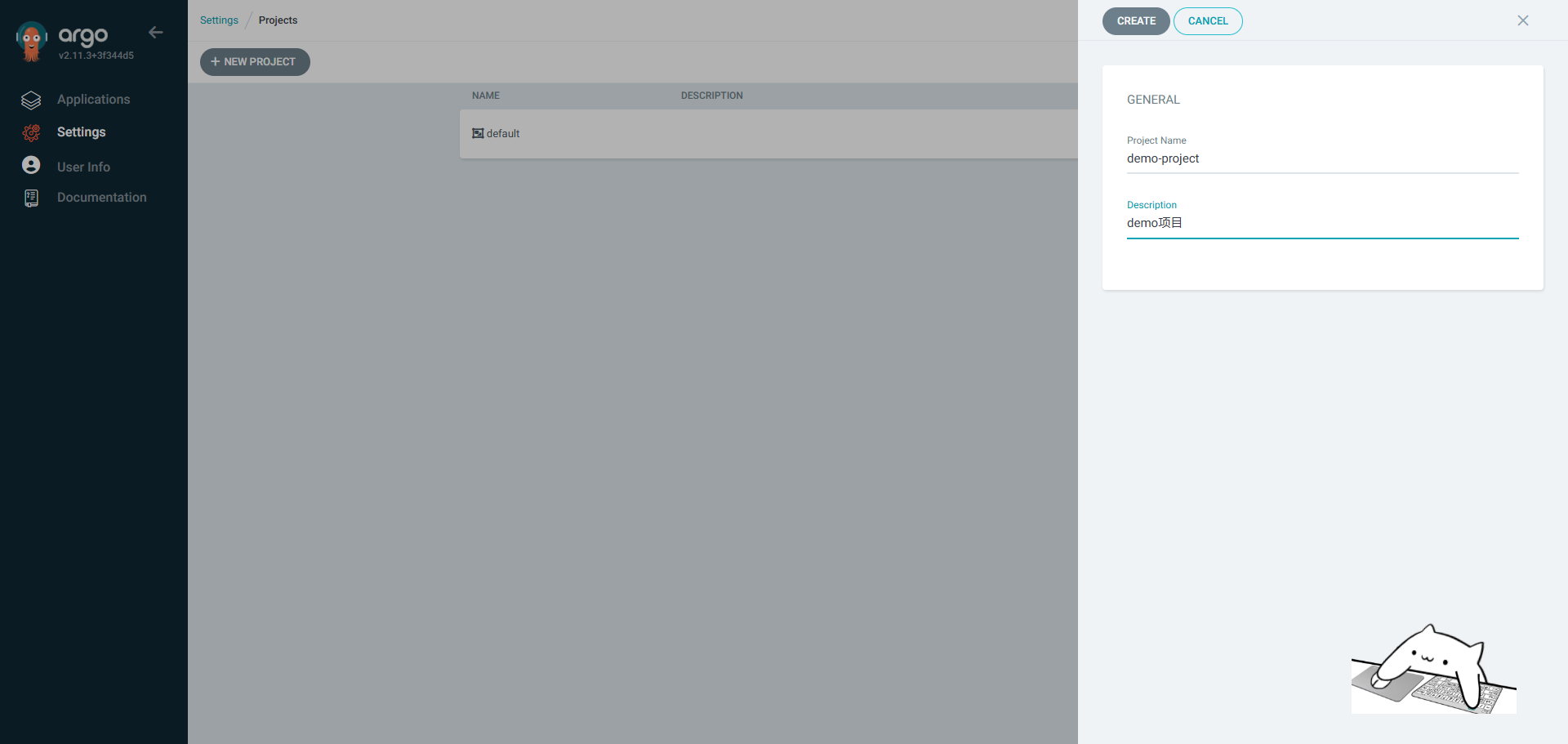

Argo CD Project

I. Project creation

Projects let you configure access-control policies for applications, for example which users or teams may deploy to a particular namespace or cluster. They also provide resource isolation, so resources in different projects do not interfere with one another, which keeps clear boundaries between teams or applications. A good practice is to create one Argo CD Project per GitLab group, so that permissions and resources are isolated between groups.

1.1 Create via the web UI

1.2 Create via the CLI

    ## argocd CLI
    # login
    argocd login argocd.idevops.site
    # list
    argocd proj list
    # remove
    argocd proj delete dev1
    # create
    argocd proj create --help
    argocd proj create dev2
    argocd proj list
    argocd proj add-source dev2 http://github.com/dev2/app.git

1.3 Create from YAML

Example document: https://argo-cd.readthedocs.io/en/stable/operator-manual/project.yaml

    apiVersion: argoproj.io/v1alpha1
    kind: AppProject
    metadata:
      name: dev3
      namespace: argocd
      finalizers:
        - resources-finalizer.argocd.argoproj.io
    spec:
      description: Example Project
      sourceRepos:
        - 'https://github.com/dev3/app.git'
      destinations:
        - namespace: dev3
          server: https://kubernetes.default.svc
          name: in-cluster
      # Deny all cluster-scoped resources from being created, except for Namespace
      clusterResourceWhitelist:
        - group: ''
          kind: Namespace
      # Allow all namespaced-scoped resources to be created, except for ResourceQuota, LimitRange, NetworkPolicy
      namespaceResourceBlacklist:
        - group: ''
          kind: ResourceQuota
        - group: ''
          kind: LimitRange
        - group: ''
          kind: NetworkPolicy
      # Deny all namespaced-scoped resources from being created, except for Deployment and StatefulSet
      namespaceResourceWhitelist:
        - group: 'apps'
          kind: Deployment
        - group: 'apps'
          kind: StatefulSet

II. Project configuration

2.1 Configure via the web UI

2.2 Configure via YAML

    apiVersion: argoproj.io/v1alpha1
    kind: AppProject
    metadata:
      name: dev1
      namespace: argocd
    spec:
      clusterResourceBlacklist:
      - group: ""
        kind: ""
      clusterResourceWhitelist:
      - group: ""
        kind: Namespace
      description: dev1 group
      destinations:
      - name: in-cluster
        namespace: dev1
        server: https://kubernetes.default.svc
      namespaceResourceWhitelist:
      - group: '*'
        kind: '*'
      roles:
      - jwtTokens:
        - iat: 1684030305
          id: 12764563-0582-4d2d-afbc-ab2712c5c47e
        name: dev1-role
        policies:
        - p, proj:dev1:dev1-role, applications, get, dev1/*, allow
        - p, proj:dev1:dev1-role, applications, sync, dev1/*, allow
        - p, proj:dev1:dev1-role, applications, delete, dev1/*, deny
      sourceRepos:
      - http://gitlab.local.com/devops/** ## set per project group: allows every repo under that group
      - ""

III. ProjectRole

A ProjectRole defines an access-control policy scoped to a single Project. It allows fine-grained permission management over the resources in the project by specifying which users or service accounts may perform which operations. ProjectRoles are mainly used to strengthen security and isolation, ensuring that only authorized users or system components can perform specific operations on the project's applications and resources.

3.1 Create a role

In the demo project we create a role named dev with these permissions: get and sync are allowed, delete is not.

3.2 Create a JWT token

    root@k8s-01:~/argocd# argocd proj role create-token demo-project dev-role
    WARN[0000] Failed to invoke grpc call. Use flag --grpc-web in grpc calls. To avoid this warning message, use flag --grpc-web.
    Create token succeeded for proj:demo-project:dev-role.
      ID: 9c150b55-848f-436c-88db-fe61e95874fc
      Issued At: 2025-08-19T06:31:59Z
      Expires At: Never
      Token: eyJhbGciOiJIUzI1NiIsInR5cCI6IkpXVCJ9.eyJpc3MiOiJhcmdvY2QiLCJzdWIiOiJwcm9qOmRlbW8tcHJvamVjdDpkZXYtcm9sZSIsIm5iZiI6MTc1NTU4NTExOSwiaWF0IjoxNzU1NTg1MTE5LCJqdGkiOiI5YzE1MGI1NS04NDhmLTQzNmMtODhkYi1mZTYxZTk1ODc0ZmMifQ.54fvz4OOOIo-wsK_hwclCmW0oSIJO1vz2Xgv4Axl08s

3.3 Verification test

    # Log out of the previously used admin account
    [root@tiaoban ~]# argocd logout argocd.local.com
    Logged out from 'argocd.local.com'
    # List apps with the token
    [root@tiaoban ~]# argocd app list --auth-token eyJhbGciOiJIUzI1NiIsInR5cCI6IkpXVCJ9.eyJpc3MiOiJhcmdvY2QiLCJzdWIiOiJwcm9qOmRlbW8tcHJvamVjdDpkZXYtcm9sZSIsIm5iZiI6MTcxOTExNTk0OSwiaWF0IjoxNzE5MTE1OTQ5LCJqdGkiOiI5MDg5OTc0OC1mYjg2LTRlZjktYjNmMC03MWY4MjBjZjEwZDYifQ.RCLx7U-2RdQ_BD5z8sBW3Ghh5RA6DnwU9VHvmU8EgQM
    WARN[0000] Failed to invoke grpc call. Use flag --grpc-web in grpc calls. To avoid this warning message, use flag --grpc-web.
    NAME         CLUSTER                         NAMESPACE  PROJECT       STATUS  HEALTH   SYNCPOLICY  CONDITIONS  REPO                                          PATH       TARGET
    argocd/demo  https://kubernetes.default.svc             demo-project  Synced  Healthy  Auto        <none>      http://gitlab.local.com/devops/argo-demo.git  manifests  HEAD
    # Run a sync with the token
    [root@tiaoban ~]# argocd app sync argocd/demo --auth-token eyJhbGciOiJIUzI1NiIsInR5cCI6IkpXVCJ9.eyJpc3MiOiJhcmdvY2QiLCJzdWIiOiJwcm9qOmRlbW8tcHJvamVjdDpkZXYtcm9sZSIsIm5iZiI6MTcxOTExNTk0OSwiaWF0IjoxNzE5MTE1OTQ5LCJqdGkiOiI5MDg5OTc0OC1mYjg2LTRlZjktYjNmMC03MWY4MjBjZjEwZDYifQ.RCLx7U-2RdQ_BD5z8sBW3Ghh5RA6DnwU9VHvmU8EgQM
    WARN[0000] Failed to invoke grpc call. Use flag --grpc-web in grpc calls. To avoid this warning message, use flag --grpc-web.
    TIMESTAMP                  GROUP                KIND          NAMESPACE  NAME   STATUS  HEALTH   HOOK  MESSAGE
    2024-06-23T12:20:07+08:00                       Service       default    myapp  Synced  Healthy
    2024-06-23T12:20:07+08:00  apps                 Deployment    default    myapp  Synced  Healthy
    2024-06-23T12:20:07+08:00  traefik.containo.us  IngressRoute  default    myapp  Synced
    2024-06-23T12:20:07+08:00  traefik.containo.us  IngressRoute  default    myapp  Synced                 ingressroute.traefik.containo.us/myapp unchanged
    2024-06-23T12:20:07+08:00                       Service       default    myapp  Synced  Healthy        service/myapp unchanged
    2024-06-23T12:20:07+08:00  apps                 Deployment    default    myapp  Synced  Healthy        deployment.apps/myapp unchanged
    Name:               argocd/demo
    Project:            demo-project
    Server:             https://kubernetes.default.svc
    Namespace:
    URL:                https://argocd.local.com/applications/argocd/demo
    Source:
    - Repo:             http://gitlab.local.com/devops/argo-demo.git
      Target:           HEAD
      Path:             manifests
    SyncWindow:         Sync Allowed
    Sync Policy:        Automated
    Sync Status:        Synced to HEAD (0ea8019)
    Health Status:      Healthy
    Operation:          Sync
    Sync Revision:      0ea801988a54f0ad73808454f2fce5030d3e28ef
    Phase:              Succeeded
    Start:              2024-06-23 12:20:07 +0800 CST
    Finished:           2024-06-23 12:20:07 +0800 CST
    Duration:           0s
    Message:            successfully synced (all tasks run)
    GROUP                KIND          NAMESPACE  NAME   STATUS  HEALTH   HOOK  MESSAGE
                         Service       default    myapp  Synced  Healthy        service/myapp unchanged
    apps                 Deployment    default    myapp  Synced  Healthy        deployment.apps/myapp unchanged
    traefik.containo.us  IngressRoute  default    myapp  Synced                 ingressroute.traefik.containo.us/myapp unchanged
    # Delete the application with the token; permission is denied
    [root@tiaoban ~]# argocd app delete argocd/demo --auth-token eyJhbGciOiJIUzI1NiIsInR5cCI6IkpXVCJ9.eyJpc3MiOiJhcmdvY2QiLCJzdWIiOiJwcm9qOmRlbW8tcHJvamVjdDpkZXYtcm9sZSIsIm5iZiI6MTcxOTExNTk0OSwiaWF0IjoxNzE5MTE1OTQ5LCJqdGkiOiI5MDg5OTc0OC1mYjg2LTRlZjktYjNmMC03MWY4MjBjZjEwZDYifQ.RCLx7U-2RdQ_BD5z8sBW3Ghh5RA6DnwU9VHvmU8EgQM
    WARN[0000] Failed to invoke grpc call. Use flag --grpc-web in grpc calls. To avoid this warning message, use flag --grpc-web.
    Are you sure you want to delete 'argocd/demo' and all its resources? [y/n] y
    FATA[0001] rpc error: code = PermissionDenied desc = permission denied: applications, delete, demo-project/demo, sub: proj:demo-project:dev-role, iat: 2024-06-23T04:12:29Z
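
The permission-denied message names a `sub` and an `iat`; both are ordinary claims carried in the payload segment of the project token. As a minimal sketch, the snippet below builds an unsigned sample token with claims shaped like those in the transcript and decodes it using only the standard library. The sample token, its placeholder signature, and the helper names are hypothetical, and no signature verification is performed.

```python
import base64
import json


def b64url_encode(data: bytes) -> str:
    """Base64url-encode without padding, as JWT segments are written."""
    return base64.urlsafe_b64encode(data).rstrip(b"=").decode("ascii")


def decode_claims(token: str) -> dict:
    """Return the (unverified) claims from the payload segment of a JWT."""
    payload = token.split(".")[1]
    payload += "=" * (-len(payload) % 4)  # restore the stripped padding
    return json.loads(base64.urlsafe_b64decode(payload))


# Hypothetical sample: header and payload are real JSON, the signature is a
# placeholder, so this token would NOT be accepted by an Argo CD server.
sample_claims = {
    "iss": "argocd",
    "sub": "proj:demo-project:dev-role",
    "iat": 1755585119,
}
sample_token = ".".join([
    b64url_encode(json.dumps({"alg": "HS256", "typ": "JWT"}).encode()),
    b64url_encode(json.dumps(sample_claims).encode()),
    "signature-placeholder",
])

claims = decode_claims(sample_token)
_, project, role = claims["sub"].split(":")
print(project, role, claims["iat"])
```

Decoding only reads the claims; whether an action is allowed is still decided by the role's policies on the server side.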

ArgoCD project

1. Creating a Project

A Project lets you configure access-control policies for applications, for example which users or teams may deploy into a particular namespace or cluster. Projects also provide resource isolation, so that resources in different projects do not interfere with one another. This keeps a clear boundary between teams or applications.

As a best practice, create one Argo CD Project for each GitLab group, so that permissions and resources stay isolated between groups.

1.1 Creating via the web UI

1.2 Creating via the CLI

    ## argocd CLI
    # login
    argocd login argocd.idevops.site
    # list
    argocd proj list
    # delete
    argocd proj delete dev1
    # create
    argocd proj create --help
    argocd proj create dev2
    argocd proj list
    argocd proj add-source dev2 http://github.com/dev2/app.git

1.3 Creating from YAML

Example document: https://argo-cd.readthedocs.io/en/stable/operator-manual/project.yaml

    apiVersion: argoproj.io/v1alpha1
    kind: AppProject
    metadata:
      name: dev3
      namespace: argocd
      finalizers:
        - resources-finalizer.argocd.argoproj.io
    spec:
      description: Example Project
      sourceRepos:
        - 'https://github.com/dev3/app.git'
      destinations:
        - namespace: dev3
          server: https://kubernetes.default.svc
          name: in-cluster
      # Deny all cluster-scoped resources from being created, except for Namespace
      clusterResourceWhitelist:
        - group: ''
          kind: Namespace
      # Allow all namespaced-scoped resources to be created, except for ResourceQuota, LimitRange, NetworkPolicy
      namespaceResourceBlacklist:
        - group: ''
          kind: ResourceQuota
        - group: ''
          kind: LimitRange
        - group: ''
          kind: NetworkPolicy
      # Deny all namespaced-scoped resources from being created, except for Deployment and StatefulSet
      namespaceResourceWhitelist:
        - group: 'apps'
          kind: Deployment
        - group: 'apps'
          kind: StatefulSet

2. Configuring a Project

2.1 Configuring via the web UI

2.2 Configuring via YAML

    apiVersion: argoproj.io/v1alpha1
    kind: AppProject
    metadata:
      name: dev1
      namespace: argocd
    spec:
      clusterResourceBlacklist:
      - group: ""
        kind: ""
      clusterResourceWhitelist:
      - group: ""
        kind: Namespace
      description: dev1 group
      destinations:
      - name: in-cluster
        namespace: dev1
        server: https://kubernetes.default.svc
      namespaceResourceWhitelist:
      - group: '*'
        kind: '*'
      roles:
      - jwtTokens:
        - iat: 1684030305
          id: 12764563-0582-4d2d-afbc-ab2712c5c47e
        name: dev1-role
        policies:
        - p, proj:dev1:dev1-role, applications, get, dev1/*, allow
        - p, proj:dev1:dev1-role, applications, sync, dev1/*, allow
        - p, proj:dev1:dev1-role, applications, delete, dev1/*, deny
      sourceRepos:
      - http://gitlab.local.com/devops/** ## configured per project group: allows every repo under that group
      - ""

3. ProjectRole

A ProjectRole defines an access-control policy scoped to a single Project. It gives fine-grained control over the resources in that project by specifying which users or service accounts may perform which actions. Its main purpose is to strengthen security and isolation, so that only authorized users or system components can act on the project's applications and resources.

3.1 Creating a role

In the demo project we create a role named dev (demo-project and dev-role in the commands that follow). The role is allowed the get and sync actions and denied the delete action.

3.2 Creating a JWT token

    root@k8s-01:~/argocd# argocd proj role create-token demo-project dev-role
    WARN[0000] Failed to invoke grpc call. Use flag --grpc-web in grpc calls. To avoid this warning message, use flag --grpc-web.
    Create token succeeded for proj:demo-project:dev-role.
      ID: 9c150b55-848f-436c-88db-fe61e95874fc
      Issued At: 2025-08-19T06:31:59Z
      Expires At: Never
      Token: eyJhbGciOiJIUzI1NiIsInR5cCI6IkpXVCJ9.eyJpc3MiOiJhcmdvY2QiLCJzdWIiOiJwcm9qOmRlbW8tcHJvamVjdDpkZXYtcm9sZSIsIm5iZiI6MTc1NTU4NTExOSwiaWF0IjoxNzU1NTg1MTE5LCJqdGkiOiI5YzE1MGI1NS04NDhmLTQzNmMtODhkYi1mZTYxZTk1ODc0ZmMifQ.54fvz4OOOIo-wsK_hwclCmW0oSIJO1vz2Xgv4Axl08s

3.3 Verification

    # Log out of the previously logged-in admin account
    [root@tiaoban ~]# argocd logout argocd.local.com
    Logged out from 'argocd.local.com'

    # List apps using the token
    [root@tiaoban ~]# argocd app list --auth-token eyJhbGciOiJIUzI1NiIsInR5cCI6IkpXVCJ9.eyJpc3MiOiJhcmdvY2QiLCJzdWIiOiJwcm9qOmRlbW8tcHJvamVjdDpkZXYtcm9sZSIsIm5iZiI6MTcxOTExNTk0OSwiaWF0IjoxNzE5MTE1OTQ5LCJqdGkiOiI5MDg5OTc0OC1mYjg2LTRlZjktYjNmMC03MWY4MjBjZjEwZDYifQ.RCLx7U-2RdQ_BD5z8sBW3Ghh5RA6DnwU9VHvmU8EgQM
    WARN[0000] Failed to invoke grpc call. Use flag --grpc-web in grpc calls. To avoid this warning message, use flag --grpc-web.
    NAME         CLUSTER                         NAMESPACE  PROJECT       STATUS  HEALTH   SYNCPOLICY  CONDITIONS  REPO                                          PATH       TARGET
    argocd/demo  https://kubernetes.default.svc             demo-project  Synced  Healthy  Auto        <none>      http://gitlab.local.com/devops/argo-demo.git  manifests  HEAD

    # Run a sync using the token
    [root@tiaoban ~]# argocd app sync argocd/demo --auth-token eyJhbGciOiJIUzI1NiIsInR5cCI6IkpXVCJ9.eyJpc3MiOiJhcmdvY2QiLCJzdWIiOiJwcm9qOmRlbW8tcHJvamVjdDpkZXYtcm9sZSIsIm5iZiI6MTcxOTExNTk0OSwiaWF0IjoxNzE5MTE1OTQ5LCJqdGkiOiI5MDg5OTc0OC1mYjg2LTRlZjktYjNmMC03MWY4MjBjZjEwZDYifQ.RCLx7U-2RdQ_BD5z8sBW3Ghh5RA6DnwU9VHvmU8EgQM
    WARN[0000] Failed to invoke grpc call. Use flag --grpc-web in grpc calls. To avoid this warning message, use flag --grpc-web.
    TIMESTAMP                  GROUP                KIND          NAMESPACE  NAME   STATUS  HEALTH   HOOK  MESSAGE
    2024-06-23T12:20:07+08:00                       Service       default    myapp  Synced  Healthy
    2024-06-23T12:20:07+08:00  apps                 Deployment    default    myapp  Synced  Healthy
    2024-06-23T12:20:07+08:00  traefik.containo.us  IngressRoute  default    myapp  Synced
    2024-06-23T12:20:07+08:00  traefik.containo.us  IngressRoute  default    myapp  Synced                 ingressroute.traefik.containo.us/myapp unchanged
    2024-06-23T12:20:07+08:00                       Service       default    myapp  Synced  Healthy        service/myapp unchanged
    2024-06-23T12:20:07+08:00  apps                 Deployment    default    myapp  Synced  Healthy        deployment.apps/myapp unchanged

    Name:               argocd/demo
    Project:            demo-project
    Server:             https://kubernetes.default.svc
    Namespace:
    URL:                https://argocd.local.com/applications/argocd/demo
    Source:
    - Repo:             http://gitlab.local.com/devops/argo-demo.git
      Target:           HEAD
      Path:             manifests
    SyncWindow:         Sync Allowed
    Sync Policy:        Automated
    Sync Status:        Synced to HEAD (0ea8019)
    Health Status:      Healthy

    Operation:          Sync
    Sync Revision:      0ea801988a54f0ad73808454f2fce5030d3e28ef
    Phase:              Succeeded
    Start:              2024-06-23 12:20:07 +0800 CST
    Finished:           2024-06-23 12:20:07 +0800 CST
    Duration:           0s
    Message:            successfully synced (all tasks run)

    GROUP                KIND          NAMESPACE  NAME   STATUS  HEALTH   HOOK  MESSAGE
                         Service       default    myapp  Synced  Healthy        service/myapp unchanged
    apps                 Deployment    default    myapp  Synced  Healthy        deployment.apps/myapp unchanged
    traefik.containo.us  IngressRoute  default    myapp  Synced                 ingressroute.traefik.containo.us/myapp unchanged

    # Try to delete the app using the token: permission is denied
    [root@tiaoban ~]# argocd app delete argocd/demo --auth-token eyJhbGciOiJIUzI1NiIsInR5cCI6IkpXVCJ9.eyJpc3MiOiJhcmdvY2QiLCJzdWIiOiJwcm9qOmRlbW8tcHJvamVjdDpkZXYtcm9sZSIsIm5iZiI6MTcxOTExNTk0OSwiaWF0IjoxNzE5MTE1OTQ5LCJqdGkiOiI5MDg5OTc0OC1mYjg2LTRlZjktYjNmMC03MWY4MjBjZjEwZDYifQ.RCLx7U-2RdQ_BD5z8sBW3Ghh5RA6DnwU9VHvmU8EgQM
    WARN[0000] Failed to invoke grpc call. Use flag --grpc-web in grpc calls. To avoid this warning message, use flag --grpc-web.
    Are you sure you want to delete 'argocd/demo' and all its resources? [y/n] y
    FATA[0001] rpc error: code = PermissionDenied desc = permission denied: applications, delete, demo-project/demo, sub: proj:demo-project:dev-role, iat: 2024-06-23T04:12:29Z
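The permission-denied message names the subject `proj:demo-project:dev-role` and an `iat` timestamp. Both come from the claims carried inside the project token itself. As a rough sketch, the claims of the token used in the verification steps can be printed without contacting Argo CD. The helper name `jwt_claims` is made up for this illustration, the snippet assumes GNU `base64`, and it only decodes the payload; it does not verify the signature.

```shell
# Print the claims (second dot-separated segment) of a JWT.
# Illustration only: the signature is NOT verified.
jwt_claims() {
  # JWTs use base64url, so map the URL-safe characters back to plain base64
  seg=$(printf '%s' "$1" | cut -d. -f2 | tr '_-' '/+')
  # Restore the '=' padding that JWTs leave out
  while [ $(( ${#seg} % 4 )) -ne 0 ]; do seg="${seg}="; done
  printf '%s' "$seg" | base64 -d
}

# Token copied from the verification commands above
TOKEN='eyJhbGciOiJIUzI1NiIsInR5cCI6IkpXVCJ9.eyJpc3MiOiJhcmdvY2QiLCJzdWIiOiJwcm9qOmRlbW8tcHJvamVjdDpkZXYtcm9sZSIsIm5iZiI6MTcxOTExNTk0OSwiaWF0IjoxNzE5MTE1OTQ5LCJqdGkiOiI5MDg5OTc0OC1mYjg2LTRlZjktYjNmMC03MWY4MjBjZjEwZDYifQ.RCLx7U-2RdQ_BD5z8sBW3Ghh5RA6DnwU9VHvmU8EgQM'

jwt_claims "$TOKEN"
echo
```

The `sub` claim is what Argo CD matches against the `p, proj:<project>:<role>, ...` policy lines, and the `iat` value 1719115949 corresponds to the 2024-06-23T04:12:29Z shown in the error. Because anyone holding the token can read these claims and act as the role, treat project tokens as secrets.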

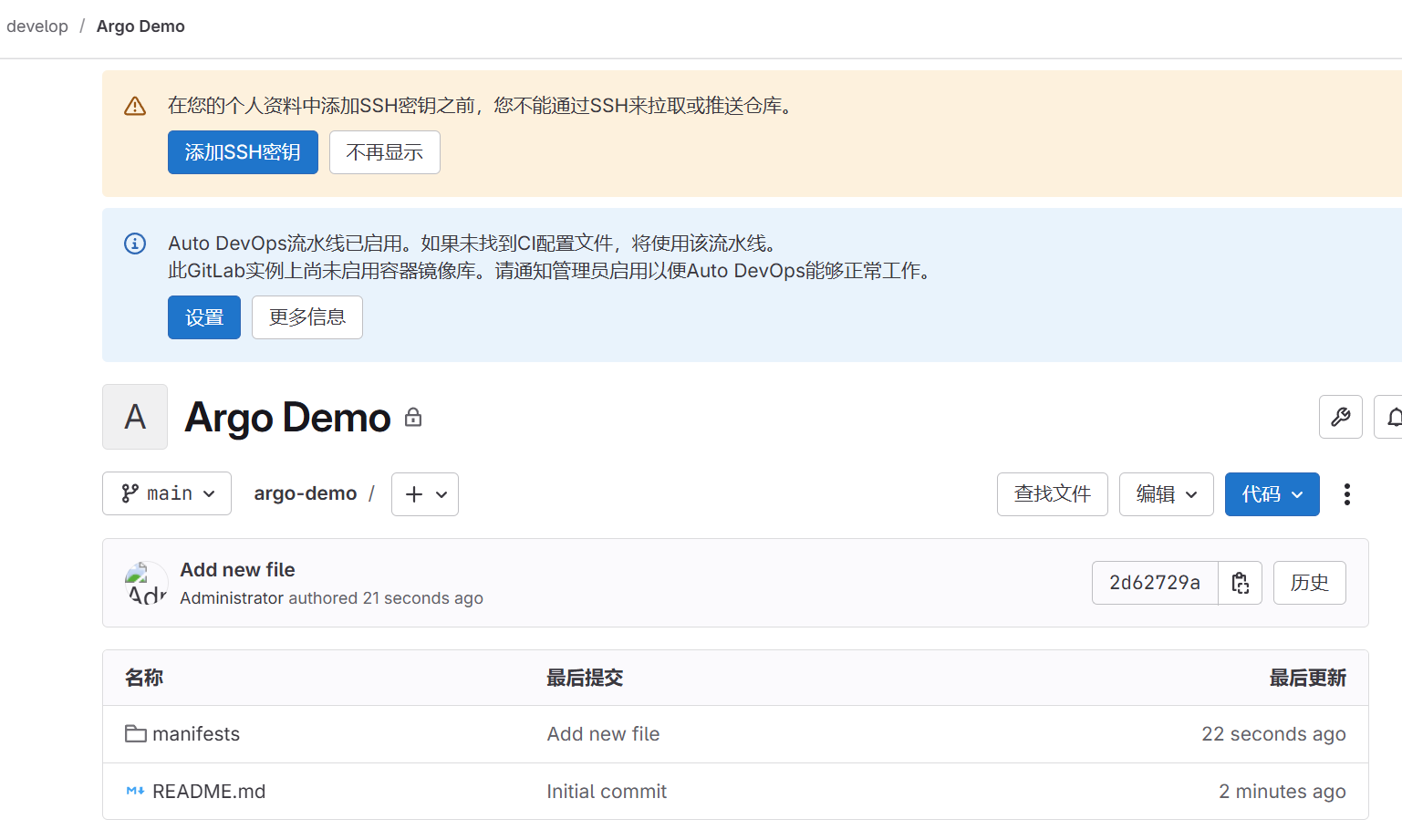

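ArgoCD quick start

1. GitLab repository setup

Create a repository named Argo Demo whose manifests directory contains only the application's YAML file, with the following content:

    apiVersion: apps/v1
    kind: Deployment
    metadata:
      name: myapp
      namespace: default
    spec:
      selector:
        matchLabels:
          app: myapp
      template:
        metadata:
          labels:
            app: myapp
        spec:
          containers:
          - name: myapp
            image: ikubernetes/myapp:v1
            resources:
              limits:
                memory: "128Mi"
                cpu: "500m"
            ports:
            - containerPort: 80
    ---
    apiVersion: v1
    kind: Service
    metadata:
      name: myapp
      namespace: default
    spec:
      type: ClusterIP
      selector:
        app: myapp
      ports:
      - port: 80
        targetPort: 80
    ---
    apiVersion: traefik.io/v1alpha1
    kind: IngressRoute
    metadata:
      name: myapp
      namespace: default
    spec:
      entryPoints:
      - web
      routes:
      - match: Host(`myapp.test.com`)
        kind: Rule
        services:
        - name: myapp
          port: 80

The GitLab repository looks like this:

2. Argo CD configuration

2.1 Adding the repository

Go to Settings → Repositories, click the CONNECT REPO button, and fill in the following information.

Once verification passes, the repository is shown as follows. Click to create an application.

Creating the application

Once created, the application appears as follows.

3. Access verification

3.1 Verifying the deployment status

Check the resources created in Kubernetes. The corresponding resources have been created successfully:

    root@k8s-01:~/argocd# kubectl get pod
    NAME                    READY   STATUS    RESTARTS   AGE
    myapp-fd4fd598f-kkrck   1/1     Running   0          113s
    root@k8s-01:~/argocd# kubectl get svc
    NAME         TYPE        CLUSTER-IP      EXTERNAL-IP   PORT(S)   AGE
    kubernetes   ClusterIP   10.96.0.1       <none>        443/TCP   19d
    myapp        ClusterIP   10.101.17.194   <none>        80/TCP    2m2s
    root@k8s-01:~/argocd# kubectl get ingressroute
    NAME    AGE
    myapp   2m13s

Visit the web page to verify.

3.2 Updating the version

Next, simulate a configuration change by changing the image version from v1 to v2.

Argo CD syncs every 180 seconds by default. The Argo CD view shows that the YAML change has been synced automatically and that a rollout is in progress.

Visiting the web page confirms that the release has completed.

At this point the relationships among the application's resources are as follows.

3.3 Rolling back

Click HISTORY AND ROLLBACK to see every release of the application and to roll back to a chosen version.

Visiting the page again shows that it has been rolled back to v1.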
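The application above is created through the web UI. The same application can be described declaratively as an Argo CD `Application` resource. The snippet below is only a sketch: the application name `demo`, the `argocd` namespace, the `default` project, and the repository URL are assumptions for illustration and should be replaced with the values entered in the UI.

```shell
# Write a declarative Application manifest (sketch: the name, project,
# namespace, and repo URL below are assumptions, not values from the article).
cat > demo-application.yaml <<'EOF'
apiVersion: argoproj.io/v1alpha1
kind: Application
metadata:
  name: demo                # assumed application name
  namespace: argocd         # assumed Argo CD install namespace
spec:
  project: default          # assumed project
  source:
    repoURL: http://gitlab.local.com/devops/argo-demo.git   # assumed repo URL
    targetRevision: HEAD
    path: manifests         # directory that holds the YAML shown above
  destination:
    server: https://kubernetes.default.svc
    namespace: default
  syncPolicy:
    automated: {}           # sync automatically when the repository changes
EOF

# Apply it once the values match your environment (left commented out here):
# kubectl apply -f demo-application.yaml
```

Keeping the Application itself in a file means the Argo CD setup can be reviewed and versioned in the same way as the manifests it deploys.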
