搜索到

154

篇与

的结果

-

-

-

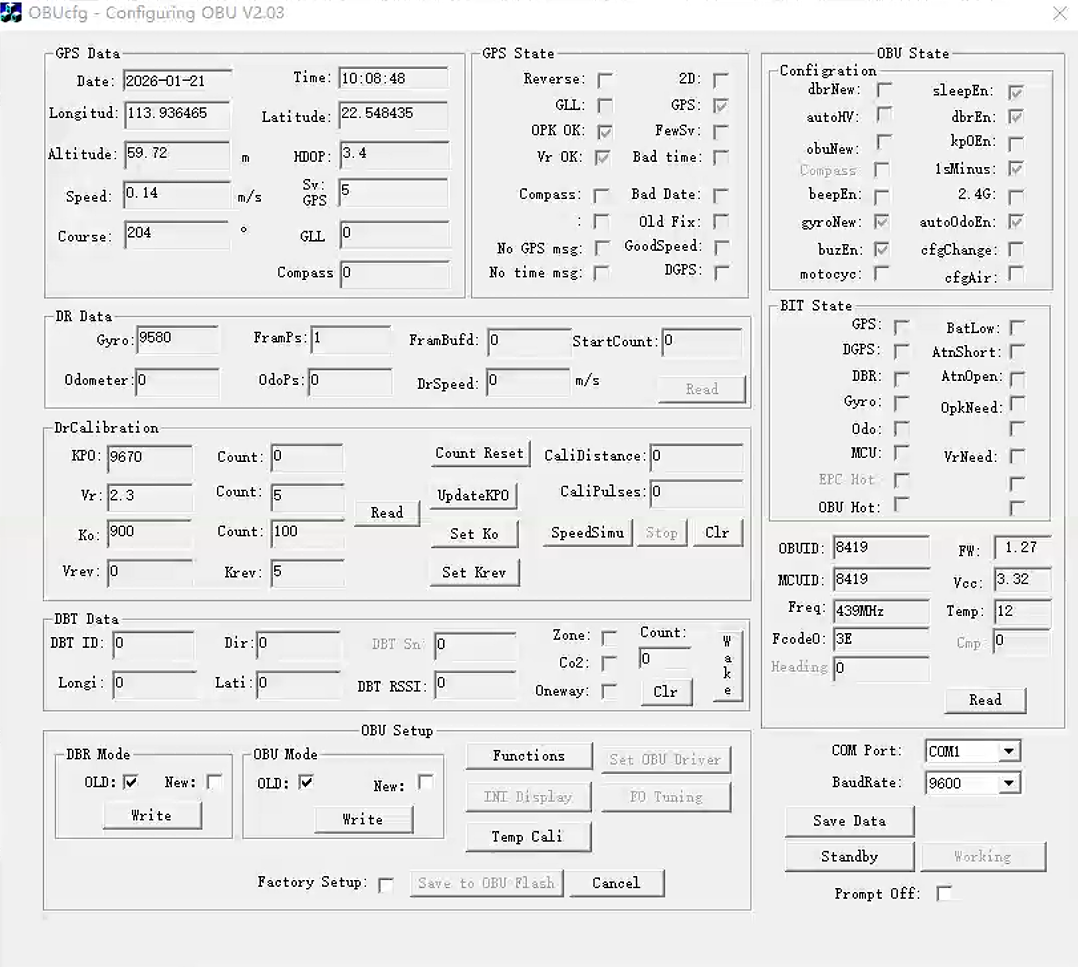

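OBU and MDT private protocol data frames

I. OBU

Raw log captured at power-up:

[2026-01-21 10:07:45.968] FF FF 52 00 20 06 26
[2026-01-21 10:07:46.105] FF FF 4B 28 23 64 C6 25
[2026-01-21 10:07:46.113] 00 8C FF FF 56 2E 05 19
[2026-01-21 10:07:46.121] 00 64 2E 28 23 00 40 00
[2026-01-21 10:07:46.130] 00 00 00 33
[2026-01-21 10:07:46.458] FF FF 53 E3 20 00 00 C3
[2026-01-21 10:07:46.467] 01 27 40 2B E3 20 73 00
[2026-01-21 10:07:46.475] 00 00 00 FD

Quick pass over the first three frames

Frame: FF FF 52 00 20 06 26
payload = 00 20 06, chk = 00^20^06 = 26 ✅
This is a typical 3-byte bitmask (24 bits are enough for the checkboxes). Do not pin down which bit corresponds to which UI checkbox yet; once you capture a few more packets with different checkboxes toggled, the mapping can be derived by comparison.

Frame: FF FF 4B 28 23 64 C6 25 00 8C
Mapping:
- Ko_raw = 0x2328 = 9000 → Ko = 9000/10 = 900
- KoCount = 0x64 → 100
- KPO_raw = 0x25C6 = 9670
- KPOCount = 0x00 → 0
- chk XOR ✅

Frame: FF FF 56 2E 05 19 00 64 2E 28 23 00 40 00 00 00 00 33
19 00 was first read as 25°C (a "temperature"). However, your later screenshot shows the UI Temp changing while these two bytes stay the same, so a real-time temperature can almost be ruled out.
This frame looks like a "DR calibration snapshot + some run-time status", in which:
- 2E 05 as u16le = 0x052E = 1326 → Vcc = 1326/400 = 3.315 V ✅
- 2E as a single byte → Vr_raw, Vr = 0x2E/20 = 46/20 = 2.30 ✅
- 28 23 → Ko_raw (appears again) ✅
- 00 (the single byte right after Ko) looks very much like Vrev (Vrev = 0 in the UI) ✅
- 40 looks like a flag byte (or one of several flag bytes) ✅
- the trailing run of 00 is most likely reserved/alignment/extension

GPS State (3 bytes of flags, high confidence that it is a bitmask)

Full frame: FF FF 52 00 20 06 26

| idx | hex | Meaning |
|---|---|---|
| 0 | FF | Frame header |
| 1 | FF | Frame header |
| 2 | 52 | Block type: GPS State |
| 3 | 00 | GPS state flags[0] (bitmask, currently all 0) |
| 4 | 20 | GPS state flags[1] (bitmask, bit5 = 1, very likely the GPS checkbox in the UI) |
| 5 | 06 | GPS state flags[2] (bitmask, bit1 + bit2 = 1, very likely checkboxes such as OPK OK and Vr OK) |
| 6 | 26 | Checksum: 00 XOR 20 XOR 06 = 26 ✅ |

This UI page has many checkboxes; 3 bytes = 24 bits hold them comfortably.

DrCalibration (Ko / KPO / Count, high confidence)

Your log split this frame across two lines, but it should be joined into one frame.

Full frame: FF FF 4B 28 23 64 C6 25 00 8C
payload (6 bytes): 28 23 64 C6 25 00

| idx | hex | Meaning (little-endian) |
|---|---|---|
| 0 | FF | Frame header |
| 1 | FF | Frame header |
| 2 | 4B | Block type: DrCalibration |
| 3 | 28 | Ko_raw low byte |
| 4 | 23 | Ko_raw high byte → 0x2328 = 9000 (UI shows Ko = 900, so Ko = Ko_raw/10 looks right) |
| 5 | 64 | Ko Count → 0x64 = 100 (Count = 100 on the Ko row of the UI) |
| 6 | C6 | KPO low byte |
| 7 | 25 | KPO high byte → 0x25C6 = 9670 (UI shows KPO = 9670) |
| 8 | 00 | KPO Count → 0 (Count = 0 on the KPO row of the UI) |
| 9 | 8C | Checksum: 28^23^64^C6^25^00 = 8C ✅ |

Block 0x56 (unknown block, looks like "configuration/snapshot")

Full frame (18 bytes, as logged): FF FF 56 2E 05 19 00 64 2E 28 23 00 40 00 00 00 00 33
payload (14 bytes): 2E 05 19 00 64 2E 28 23 00 40 00 00 00 00

| idx | hex | What is certain so far / possible meaning |
|---|---|---|
| 0 | FF | Frame header |
| 1 | FF | Frame header |
| 2 | 56 | Block type: 0x56 (unknown block, looks like "configuration/snapshot") |
| 3 | 2E | idx3-4 as u16le: 2E 05 → 0x052E = 1326; 1326/400 = 3.315, very close to Vcc = 3.32 in the UI |
| 4 | 05 | High byte of the same u16 (candidate field A, see the note below) |
| 5 | 19 | 19 00 → u16le = 0x0019 = 25; 25°C is a very typical "factory/room-temperature calibration reference point" (many devices use a fixed reference temperature for compensation/calibration parameters) |
| 6 | 00 | High byte of the same u16 |
| 7 | 64 | Looks like Count = 100 (same as KoCount in block 0x4B) |
| 8 | 2E | Very likely Vr_raw, Vr = idx8/20 = 2.30 |
| 9 | 28 | Ko_raw low byte |
| 10 | 23 | Ko_raw high byte → 0x2328 = 9000 (identical to block 0x4B); idx9-10 = Ko_raw (/10) |
| 11 | 00 | Reserved / small field |
| 12 | 40 | Flags or configuration byte (very likely a bitmask); 0x40 = 0100 0000b, i.e. exactly one bit set |
| 13 | 00 | Reserved |
| 14 | 00 | Reserved |
| 15 | 00 | Reserved |
| 16 | 00 | Reserved |
| 17 | 33 | Checksum: XOR(payload) = 33 ✅ |

Packing several checkboxes into one byte/integer, with each bit representing one boolean state, is called a bitmask or flag register. It is a very common space-saving technique in protocols and registers.

Note on idx3-4: a /400 scale is a common kind of fixed-point voltage unit. The voltage is stored as an integer where 1 count = 1/400 V = 0.0025 V = 2.5 mV. So if idx3-4 is read as u16le = 0x052E = 1326, then Vcc = 1326/400 = 3.315 V.

Note on idx5: 0x19 = 25 may be a temperature (25°C) or some kind of status code. Your screenshot shows Temp = 12, but it was taken at 10:08:48, while this 0x56 frame is from 10:07:46; at power-up it is common for the temperature and the display refresh to be out of sync. The binary frames that follow update the temperature every second.

OBU basic info/state (ID and FW high confidence, the rest to be verified)

Full frame: FF FF 53 E3 20 00 00 C3 01 27 40 2B E3 20 73 00 00 00 00 FD
payload (16 bytes): E3 20 00 00 C3 01 27 40 2B E3 20 73 00 00 00 00

| idx | hex | Meaning |
|---|---|---|
| 0 | FF | Frame header |
| 1 | FF | Frame header |
| 2 | 53 | Block type: OBU State / basic info |
| 3 | E3 | OBUID low byte |
| 4 | 20 | OBUID high byte → 0x20E3 = 8419 (matches OBUID = 8419 in the UI) ✅ |
| 5 | 00 | Reserved/status |
| 6 | 00 | Reserved/status |
| 7 | C3 | Status/model/code (to be verified) |
| 8 | 01 | FW major = 1 ✅ |
| 9 | 27 | FW minor = 0x27 (looks like BCD 27) → UI shows 1.27 ✅ |
| 10 | 40 | Flags (to be verified, possibly related to the Configuration checkboxes at the top right) |
| 11 | 2B | RF/frequency index (to be verified; UI Freq = 439 MHz is probably computed as "base frequency + index") |
| 12 | E3 | MCUID low byte |
| 13 | 20 | MCUID high byte → 0x20E3 = 8419 (matches MCUID = 8419 in the UI) ✅ |
| 14 | 73 | Mode/status code (74 also appears in your other logs, so it looks like Standby/Working or a state change) |
| 15 | 00 | Reserved |
| 16 | 00 | Reserved |
| 17 | 00 | Reserved |
| 18 | 00 | Reserved |
| 19 | FD | Checksum: XOR(payload) = FD ✅ |

Raw log of the periodic FF 81 frames that follow (one 60-byte frame roughly every second, logged in 8-byte chunks):

[2026-01-21 10:07:47.010] FF 81 E8 03 00 00 00 00 [2026-01-21 10:07:47.019] 17 3B 3B 00 00 00 00 00 [2026-01-21 10:07:47.027] 00 00 00 00 00 00 00 00 [2026-01-21 10:07:47.035] 00 00 00 00 0F 27 00 00 [2026-01-21 10:07:47.044] BD 25 00 00 62 09 00 3C [2026-01-21 10:07:47.052] 00 00 00 00 00 00 00 00 [2026-01-21 10:07:47.060] 00 00 00 00 00 00 00 00 [2026-01-21 10:07:47.069] 00 00 80 32 [2026-01-21 10:07:48.010] FF 81 E8 03 00 00 00 00 [2026-01-21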
10:07:48.018] 17 3B 3A 00 00 00 00 00 [2026-01-21 10:07:48.026] 00 00 00 00 00 00 00 00 [2026-01-21 10:07:48.035] 00 00 00 00 0F 27 00 00 [2026-01-21 10:07:48.043] 68 25 00 00 A5 0A 0C 3C [2026-01-21 10:07:48.051] 00 00 00 00 00 00 00 00 [2026-01-21 10:07:48.060] 00 00 00 00 00 00 00 00 [2026-01-21 10:07:48.068] 00 00 87 31 [2026-01-21 10:07:49.010] FF 81 E8 03 00 00 00 00 [2026-01-21 10:07:49.019] 17 3B 39 00 00 00 00 00 [2026-01-21 10:07:49.027] 00 00 00 00 00 00 00 00 [2026-01-21 10:07:49.035] 00 00 00 00 0F 27 00 00 [2026-01-21 10:07:49.044] 6B 25 00 00 01 0B 0C 3C [2026-01-21 10:07:49.052] 00 00 00 00 00 00 00 00 [2026-01-21 10:07:49.060] 00 00 00 00 00 00 00 00 [2026-01-21 10:07:49.069] 00 00 29 31 [2026-01-21 10:07:50.016] FF 81 E8 03 00 00 00 00 [2026-01-21 10:07:50.024] 17 3B 38 00 00 00 00 00 [2026-01-21 10:07:50.032] 00 00 00 00 00 00 00 00 [2026-01-21 10:07:50.041] 00 00 00 00 0F 27 00 00 [2026-01-21 10:07:50.049] 6A 25 00 00 2F 0B 0C 3C [2026-01-21 10:07:50.057] 00 00 00 00 00 00 00 00 [2026-01-21 10:07:50.066] 00 00 00 00 00 00 00 00 [2026-01-21 10:07:50.074] 00 00 FD 30 [2026-01-21 10:07:51.011] FF 81 E8 03 00 00 00 00 [2026-01-21 10:07:51.019] 17 3B 37 00 00 00 00 00 [2026-01-21 10:07:51.028] 00 00 00 00 00 00 00 00 [2026-01-21 10:07:51.036] 00 00 00 00 0F 27 00 00 [2026-01-21 10:07:51.044] 6A 25 00 00 3F 0B 0C 3C [2026-01-21 10:07:51.053] 00 00 00 00 00 00 00 00 [2026-01-21 10:07:51.061] 00 00 00 00 00 00 00 00 [2026-01-21 10:07:51.070] 00 00 EE 30 [2026-01-21 10:07:51.679] FF 81 E8 03 00 00 00 00 [2026-01-21 10:07:51.687] 02 07 35 00 00 00 00 00 [2026-01-21 10:07:51.695] 00 00 00 00 00 00 00 00 [2026-01-21 10:07:51.704] 00 00 00 00 0F 27 00 00 [2026-01-21 10:07:51.712] 6A 25 00 00 7C 0B 0C 3C [2026-01-21 10:07:51.720] 00 00 00 00 00 00 00 00 [2026-01-21 10:07:51.729] 00 00 00 00 00 00 00 00 [2026-01-21 10:07:51.737] 00 00 C8 64 [2026-01-21 10:07:52.680] FF 81 E8 03 00 00 00 00 [2026-01-21 10:07:52.688] 02 07 36 00 00 00 00 00 [2026-01-21 
10:07:52.696] 00 00 00 00 00 00 00 00 [2026-01-21 10:07:52.705] 00 00 00 00 0F 27 00 00 [2026-01-21 10:07:52.713] 6A 25 00 00 9B 0B 0C 3C [2026-01-21 10:07:52.721] 00 00 00 00 00 00 00 00 [2026-01-21 10:07:52.730] 00 00 00 00 00 00 00 00 [2026-01-21 10:07:52.738] 00 00 A8 64 [2026-01-21 10:07:53.679] FF 81 E8 03 00 00 00 00 [2026-01-21 10:07:53.687] 02 07 37 00 00 00 00 00 [2026-01-21 10:07:53.695] 00 00 00 00 00 00 00 00 [2026-01-21 10:07:53.704] 00 00 00 00 0F 27 00 00 [2026-01-21 10:07:53.712] 6A 25 00 00 6D 0B 0C 3C [2026-01-21 10:07:53.720] 00 00 00 00 00 00 00 00 [2026-01-21 10:07:53.729] 00 00 00 00 00 00 00 00 [2026-01-21 10:07:53.737] 00 00 D5 64 [2026-01-21 10:07:54.839] FF 81 E8 03 00 00 00 00 [2026-01-21 10:07:54.848] 02 07 38 00 00 00 00 00 [2026-01-21 10:07:54.856] 00 00 00 00 00 00 00 00 [2026-01-21 10:07:54.864] 00 00 00 00 0F 27 00 00 [2026-01-21 10:07:54.873] 6A 25 00 00 C9 0B 0C 3C [2026-01-21 10:07:54.881] 00 00 00 00 00 00 00 00 [2026-01-21 10:07:54.889] 00 00 00 00 00 00 00 00 [2026-01-21 10:07:54.898] 00 00 78 64 [2026-01-21 10:07:55.679] FF 81 E8 03 00 00 00 00 [2026-01-21 10:07:55.687] 02 07 39 00 00 00 00 00 [2026-01-21 10:07:55.695] 00 00 00 00 00 00 00 00 [2026-01-21 10:07:55.704] 00 00 00 00 0F 27 00 00 [2026-01-21 10:07:55.712] 6A 25 00 00 BA 0B 0C 3C [2026-01-21 10:07:55.720] 00 00 00 00 00 00 00 00 [2026-01-21 10:07:55.729] 00 00 00 00 00 00 00 00 [2026-01-21 10:07:55.737] 00 00 86 64 [2026-01-21 10:07:56.679] FF 81 E8 03 00 00 00 00 [2026-01-21 10:07:56.687] 02 07 3A 00 00 00 00 00 [2026-01-21 10:07:56.696] 00 00 00 00 00 00 00 00 [2026-01-21 10:07:56.704] 00 00 00 00 0F 27 00 00 [2026-01-21 10:07:56.712] 69 25 00 00 D8 0B 0C 3C [2026-01-21 10:07:56.721] 00 00 00 00 00 00 00 00 [2026-01-21 10:07:56.729] 00 00 00 00 00 00 00 00 [2026-01-21 10:07:56.737] 00 00 68 64 [2026-01-21 10:07:57.679] FF 81 E8 03 00 00 00 00 [2026-01-21 10:07:57.687] 02 07 3B 00 00 00 00 00 [2026-01-21 10:07:57.696] 00 00 00 00 00 00 00 00 [2026-01-21 
10:07:57.704] 00 00 00 00 0F 27 00 00 [2026-01-21 10:07:57.712] 6A 25 00 00 E8 0B 0C 3C [2026-01-21 10:07:57.721] 00 00 00 00 00 00 00 00 [2026-01-21 10:07:57.729] 00 00 00 00 00 00 00 00 [2026-01-21 10:07:57.737] 00 00 56 64 [2026-01-21 10:07:58.678] FF 81 E8 03 00 00 00 00 [2026-01-21 10:07:58.686] 02 08 00 00 00 00 00 00 [2026-01-21 10:07:58.694] 00 00 00 00 00 00 00 00 [2026-01-21 10:07:58.702] 00 00 00 00 0F 27 00 00 [2026-01-21 10:07:58.711] 69 25 00 00 E8 0B 0C 3C [2026-01-21 10:07:58.719] 00 00 00 00 00 00 00 00 [2026-01-21 10:07:58.728] 00 00 00 00 00 00 00 00 [2026-01-21 10:07:58.736] 00 00 92 63 [2026-01-21 10:07:59.679] FF 81 E8 03 00 00 00 00 [2026-01-21 10:07:59.687] 02 08 01 00 00 00 00 00 [2026-01-21 10:07:59.695] 00 00 00 00 00 00 00 00 [2026-01-21 10:07:59.704] 00 00 00 00 0F 27 00 00 [2026-01-21 10:07:59.712] 6A 25 00 00 C9 0B 0C 3C [2026-01-21 10:07:59.720] 00 00 00 00 00 00 00 00 [2026-01-21 10:07:59.729] 00 00 00 00 00 00 00 00 [2026-01-21 10:07:59.737] 00 00 AF 63 [2026-01-21 10:08:00.780] FF 81 E8 03 00 00 00 00 [2026-01-21 10:08:00.788] 02 08 02 00 32 E9 8B FE [2026-01-21 10:08:00.797] 48 04 FC 0A 1F E3 01 00 [2026-01-21 10:08:00.805] 00 00 00 00 0F 27 00 00 [2026-01-21 10:08:00.813] 69 25 00 00 C9 0B 0C 3C [2026-01-21 10:08:00.822] 00 00 00 00 00 00 00 00 [2026-01-21 10:08:00.830] 00 00 00 00 00 00 00 00 [2026-01-21 10:08:00.839] 00 00 8E 89 [2026-01-21 10:08:01.698] FF 81 E8 03 00 00 00 00 [2026-01-21 10:08:01.707] 02 08 03 00 EC D8 8B FE [2026-01-21 10:08:01.716] 67 05 FC 0A 48 E3 01 00 [2026-01-21 10:08:01.723] 00 00 00 00 0F 27 00 00 [2026-01-21 10:08:01.732] 69 25 00 00 D8 0B 0C 3C [2026-01-21 10:08:01.740] 00 00 00 00 00 00 00 00 [2026-01-21 10:08:01.748] 00 00 00 00 00 00 00 00 [2026-01-21 10:08:01.757] 00 00 7C 98 [2026-01-21 10:08:02.699] FF 81 E8 03 00 00 00 00 [2026-01-21 10:08:02.708] 02 08 04 00 99 C8 8B FE [2026-01-21 10:08:02.716] 87 06 FC 0A 72 E3 01 00 [2026-01-21 10:08:02.724] 00 00 00 00 0F 27 00 00 [2026-01-21 
10:08:02.733] 69 25 00 00 F7 0B 0C 3C [2026-01-21 10:08:02.741] 00 00 00 00 00 00 00 00 [2026-01-21 10:08:02.749] 00 00 00 00 00 00 00 00 [2026-01-21 10:08:02.758] 00 00 65 A7 [2026-01-21 10:08:03.699] FF 81 E8 03 00 00 00 00 [2026-01-21 10:08:03.707] 02 08 05 00 47 B8 8B FE [2026-01-21 10:08:03.716] A8 07 FC 0A 9C E3 01 00 [2026-01-21 10:08:03.724] 00 00 00 00 0F 27 00 00 [2026-01-21 10:08:03.732] 69 25 00 00 07 0C 0C 3C [2026-01-21 10:08:03.741] 00 00 00 00 00 00 00 00 [2026-01-21 10:08:03.749] 00 00 00 00 00 00 00 00 [2026-01-21 10:08:03.758] 00 00 5B B6 [2026-01-21 10:08:04.699] FF 81 E8 03 00 00 00 00 [2026-01-21 10:08:04.707] 02 08 06 00 F4 A7 8B FE [2026-01-21 10:08:04.716] C9 08 FC 0A C5 E3 01 00 [2026-01-21 10:08:04.724] 00 00 00 00 0F 27 00 00 [2026-01-21 10:08:04.734] 6A 25 00 00 F7 0B 0C 3C [2026-01-21 10:08:04.741] 00 00 00 00 00 00 00 00 [2026-01-21 10:08:04.749] 00 00 00 00 00 00 00 00 [2026-01-21 10:08:04.757] 00 00 72 C5 [2026-01-21 10:08:05.701] FF 81 E8 03 00 00 00 00 [2026-01-21 10:08:05.709] 02 08 07 00 8E 39 90 FE [2026-01-21 10:08:05.718] 64 D0 ED 0A 32 D8 01 00 [2026-01-21 10:08:05.727] 00 00 00 00 0F 27 00 00 [2026-01-21 10:08:05.736] 69 25 00 00 35 0C 0C 3C [2026-01-21 10:08:05.745] 00 00 00 00 00 00 00 00 [2026-01-21 10:08:05.754] 00 00 00 00 00 00 00 00 [2026-01-21 10:08:05.761] 00 00 9C 77 [2026-01-21 10:08:06.872] FF 81 E8 03 00 00 00 00 [2026-01-21 10:08:06.880] 02 08 08 00 EA D4 88 FE [2026-01-21 10:08:06.889] A2 B9 F9 0A FE EA 01 00 [2026-01-21 10:08:06.899] 00 00 00 00 0F 27 00 00 [2026-01-21 10:08:06.905] 6B 25 00 00 35 0C 0C 3C [2026-01-21 10:08:06.913] 00 00 00 00 00 00 00 00 [2026-01-21 10:08:06.921] 00 00 00 00 00 00 00 00 [2026-01-21 10:08:06.930] 00 00 2F E0 [2026-01-21 10:08:07.701] FF 81 E8 03 00 00 00 00 [2026-01-21 10:08:07.709] 02 08 09 00 C0 C3 88 FE [2026-01-21 10:08:07.719] AB BA F9 0A 2A EB 01 00 [2026-01-21 10:08:07.728] 00 00 00 00 0F 27 00 00 [2026-01-21 10:08:07.737] 69 25 00 00 35 0C 0C 3C [2026-01-21 
10:08:07.743] 00 00 00 00 00 00 00 00 [2026-01-21 10:08:07.751] 00 00 00 00 00 00 00 00 [2026-01-21 10:08:07.760] 00 00 25 F0 [2026-01-21 10:08:08.699] FF 81 E8 03 00 00 00 00 [2026-01-21 10:08:08.708] 02 08 0A 00 96 B2 88 FE [2026-01-21 10:08:08.718] B5 BB F9 0A 56 EB 01 00 [2026-01-21 10:08:08.727] 00 00 00 00 0F 27 00 00 [2026-01-21 10:08:08.734] 6A 25 00 00 54 0C 0C 3C [2026-01-21 10:08:08.741] 00 00 00 00 00 00 00 00 [2026-01-21 10:08:08.749] 00 00 00 00 00 00 00 00 [2026-01-21 10:08:08.758] 00 00 F8 FF [2026-01-21 10:08:09.698] FF 81 E8 03 00 00 00 00 [2026-01-21 10:08:09.707] 02 08 0B 00 6C A1 88 FE [2026-01-21 10:08:09.717] BF BC F9 0A 82 EB 01 00 [2026-01-21 10:08:09.726] 00 00 00 00 0F 27 00 00 [2026-01-21 10:08:09.735] 68 25 00 00 54 0C 0C 3C [2026-01-21 10:08:09.744] 00 00 00 00 00 00 00 00 [2026-01-21 10:08:09.751] 00 00 00 00 00 00 00 00 [2026-01-21 10:08:09.757] 00 00 ED 0F [2026-01-21 10:08:10.698] FF 81 E8 03 00 00 00 00 [2026-01-21 10:08:10.707] 02 08 0C 00 42 90 88 FE [2026-01-21 10:08:10.717] C9 BD F9 0A AE EB 01 00 [2026-01-21 10:08:10.726] 00 00 00 00 0F 27 00 00 [2026-01-21 10:08:10.735] 69 25 00 00 44 0C 0C 3C [2026-01-21 10:08:10.741] 00 00 00 00 00 00 00 00 [2026-01-21 10:08:10.749] 00 00 00 00 00 00 00 00 [2026-01-21 10:08:10.757] 00 00 EF 1F [2026-01-21 10:08:11.697] FF 81 E8 03 00 00 00 00 [2026-01-21 10:08:11.705] 02 08 0D 00 19 7F 88 FE [2026-01-21 10:08:11.715] D4 BE F9 0A DA EB 01 00 [2026-01-21 10:08:11.724] 00 00 00 00 0F 27 00 00 [2026-01-21 10:08:11.732] 69 25 00 00 54 0C 0C 3C [2026-01-21 10:08:11.739] 00 00 00 00 00 00 00 00 [2026-01-21 10:08:11.747] 00 00 00 00 00 00 00 00 [2026-01-21 10:08:11.755] 00 00 D0 2F [2026-01-21 10:08:12.871] FF 81 E8 03 00 00 00 00 [2026-01-21 10:08:12.879] 02 08 0E 00 F0 6D 88 FE [2026-01-21 10:08:12.888] DF BF F9 0A 06 EC 01 00 [2026-01-21 10:08:12.897] 00 00 00 00 0F 27 00 00 [2026-01-21 10:08:12.904] 69 25 00 00 44 0C 0C 3C [2026-01-21 10:08:12.913] 00 00 00 00 00 00 00 00 [2026-01-21 
10:08:12.921] 00 00 00 00 00 00 00 00 [2026-01-21 10:08:12.930] 00 00 D1 3F

Earlier, clicking Read in the UI (see screenshot) sent these commands to the OBU:

[2026-01-19 10:05:10.884] FF 89 04 56 56
[2026-01-19 10:05:10.961] FF 89 04 4B 4B
[2026-01-19 10:05:11.164] FF 89 04 53 53

| Byte index | Value | Meaning |
|---|---|---|
| 0 | FF | Frame header |
| 1 | 89 | "Host→OBU command" marker (different from the FF FF used for OBU→Host) |
| 2 | 04 | Looks like a length or fixed function code (at least it is always 04 for Read) |
| 3 | xx | Command code: 56/4B/53 |
| 4 | xx | Checksum (here equal to the command code itself) |

The checksum of this command is very "lazy": the last byte simply equals cmd, which is why you see 56 56 / 4B 4B / 53 53. This is also what an XOR over the single command byte would produce, so it may be the same XOR rule as in the FF FF frames.

The OBU replied:

[2026-01-19 10:21:37.452] FF FF 56 2E 05 19 00 64
[2026-01-19 10:21:37.461] 2E 28 23 00 40 00 00 00
[2026-01-19 10:21:37.468] 00 33
[2026-01-19 10:21:37.968] FF FF 53 E3 20 00 00 C3
[2026-01-19 10:21:37.976] 01 27 40 2B E3 20 73 00
[2026-01-19 10:21:37.987] 00 00 00 FD
[2026-01-19 10:21:38.967] FF FF 4B 28 23 64 C6 25
[2026-01-19 10:21:38.974] 00 8C

Clicking Read in the UI sent this to the OBU (the second line is ASCII "$@C?" followed by CR LF):

[2026-01-19 10:17:03.590] FF 89 04 53 53
[2026-01-19 10:17:04.591] 24 40 43 3F 0D 0A

The OBU replied (note idx14 changing from 73 to 74, with the checksum changing from FD to FA accordingly):

[2026-01-15 13:39:32.955] FF FF 53 E3 20 00 00 C3
[2026-01-15 13:39:32.964] 01 27 40 2B E3 20 73 00
[2026-01-15 13:39:32.974] 00 00 00 FD
[2026-01-15 13:39:35.955] FF FF 53 E3 20 00 00 C3
[2026-01-15 13:39:35.964] 01 27 40 2B E3 20 74 00
[2026-01-15 13:39:35.974] 00 00 00 FA

Periodic telemetry frame (FF 81), byte by byte

[2026-01-13 14:25:14.972] FF 81 E8 03 0D 01 EA 07
[2026-01-13 14:25:14.980] 06 19 0E 08 FB 6E 58 02
[2026-01-13 14:25:14.992] 0D 40 DA 0B 39 18 01 00
[2026-01-13 14:25:15.000] A6 00 6F 02 CA 00 00 00
[2026-01-13 14:25:15.007] 6F 25 00 00 6C 19 0C 82
[2026-01-13 14:25:15.020] 00 00 00 00 00 00 00 00
[2026-01-13 14:25:15.025] 00 00 00 00 00 00 00 00
[2026-01-13 14:25:15.034] 00 00 BB 3B

| idx | hex | dec | Field / interpretation |
|---|---|---|---|
| 0 | FF | 255 | Frame header/sync (fixed) |
| 1 | 81 | 129 | Frame header/sync (fixed) |
| 2 | E8 | 232 | Frame type/constant (same in all frames) |
| 3 | 03 | 3 | Frame type/constant (same in all frames) |
| 4 | 0D | 13 | Day (13) |
| 5 | 01 | 1 | Month (1) |
| 6 | EA | 234 | Year (LE) low byte |
| 7 | 07 | 7 | Year (LE) high byte → 0x07EA = 2026 (value = 7×256 + 234 = 2026) |
| 8 | 06 | 6 | Hour (very likely UTC) |
| 9 | 19 | 25 | Minute (25) |
| 10 | 0E | 14 | Second (14, +1 in each following frame) |
| 11 | 08 | 8 | SvGPS (number of GPS satellites = 8) |
| 12 | FB | 251 | Latitude u32 (LE) byte0 |
| 13 | 6E | 110 | Latitude u32 (LE) byte1 |
| 14 | 58 | 88 | Latitude u32 (LE) byte2 |
| 15 | 02 | 2 | Latitude u32 (LE) byte3 |
| 16 | 0D | 13 | Longitude u32 (LE) byte0 |
| 17 | 40 | 64 | Longitude u32 (LE) byte1 |
| 18 | DA | 218 | Longitude u32 (LE) byte2 |
| 19 | 0B | 11 | Longitude u32 (LE) byte3 |
| 20 | 39 | 57 | Course int16 (LE) byte0 |
| 21 | 18 | 24 | Course int16 (LE) byte1 |
| 22 | 01 | 1 | FramPs u16 (LE) byte0 |
| 23 | 00 | 0 | FramPs u16 (LE) byte1 |
| 24 | A6 | 166 | Altitude u16 (LE) byte0 |
| 25 | 00 | 0 | Altitude u16 (LE) byte1 (possibly altitude above sea level) |
| 26 | 6F | 111 | HDOP u16 (LE) byte0 |
| 27 | 02 | 2 | HDOP u16 (LE) byte1 |
| 28 | CA | 202 | Speed u16 (LE) byte0 |
| 29 | 00 | 0 | Speed u16 (LE) byte1 |
| 30 | 00 | 0 | FramBufd u16 (LE) byte0 (possibly FramBufd) |
| 31 | 00 | 0 | FramBufd u16 (LE) byte1 |
| 32 | 6F | 111 | Gyro u16 (LE) byte0 |
| 33 | 25 | 37 | Gyro u16 (LE) byte1 |
| 34 | 00 | 0 | Reserved/counter u16 (LE) byte0 (currently 0) |
| 35 | 00 | 0 | Reserved/counter u16 (LE) byte1 |
| 36 | 6C | 108 | Status/quality u16 (LE) byte0; possibly supply voltage (around 11 V to 12 V) |
| 37 | 19 | 25 | Status/quality u16 (LE) byte1; possibly Temp (temperature) |
| 38 | 0C | 12 | StatusMask u16 (LE) byte0 |
| 39 | 82 | 130 | StatusMask u16 (LE) byte1 → 0x820C |
| 40..57 | 00 (×18) | 0 | Reserved/extension area |
| 58 | BB | 187 | Checksum (LE) byte0 |
| 59 | 3B | 59 | Checksum (LE) byte1 → 0x3BBB |

Notes on individual fields:

- Latitude and longitude are stored as radians × 1e8 and then converted to degrees.
- Latitude: bytes FB 6E 58 02 (byte0→byte3), combined little-endian into a 32-bit number: hex 0x02586EFB, decimal 39,350,011. In radians: 39,350,011 / 1e8 = 0.39350011 rad. In degrees: deg = rad × 180/π, so 0.39350011 × 180/π ≈ 22.54589554°, i.e. Latitude ≈ 22.545896°.
- Longitude: bytes 0D 40 DA 0B, hex 0x0BDA400D, decimal 198,852,621. In radians: 198,852,621 / 1e8 = 1.98852621 rad. In degrees: 1.98852621 × 180/π ≈ 113.93415928°, i.e. Longitude ≈ 113.934159°.
- Course: byte0 = 0x39, byte1 = 0x18 → 0x1839 = 24×256 + 57 = 6201; Course ≈ 6201/100 = 62.01°.
- FramPs: 0x01 0x00 → 0x0001 = 1; FramPs = b22 + (b23<<8). It is mostly 1 here and later becomes 0 (it looks like some status/counter switch); the raw value is normally displayed directly.
- Altitude: 0xA6 0x00 → 0x00A6 = 166.
- HDOP: HDOP_raw = b26 + (b27<<8), HDOP ≈ HDOP_raw/400.0; bytes 6F 02 ⇒ 0x026F = 623 ⇒ 623/400 = 1.5575.
- Speed: Speed_raw = b28 + (b29<<8), Speed (m/s) ≈ Speed_raw/100.0; bytes CA 00 ⇒ 202 ⇒ 2.02 m/s.
- Gyro: Gyro = b32 + (b33<<8); 6F 25 ⇒ 0x256F = 9583, which matches Gyro = 9583 exactly.
- The screenshot also shows StartCount, Odometer, DrSpeed and OdoPs, all of them 0. They do not appear in this binary frame because they belong to query/parameter frames, which are only returned after clicking Read.
- StatusMask: byte[38] = 0x0C, byte[39] = 0x82, value = 0x0C + (0x82<<8) = 0x820C = 33292. In binary, 0x820C = 1000 0010 0000 1100b. Exactly 4 bits are set, and they map to 4 checked items: 0x8000 (bit15), 0x0200 (bit9), 0x0008 (bit3), 0x0004 (bit2).

Using each bit of a 16-bit number as a switch is a typical bitmask/flag register. Many protocols do this: one 16-bit field carries 16 boolean states, and undefined bits are usually kept at 0.

2) Key field conversion table (multi-byte fields combined as LE, with unit/formula)

| Field | Offset | Raw hex (LE order) | Raw integer | Formula | Result |
|---|---|---|---|---|---|
| Year | 6..7 | EA 07 | 0x07EA = 2026 | none | 2026 |
| Time (H:M:S) | 8..10 | 06 19 0E | none | none | 06:25:14 (looks like UTC) |
| SvGPS | 11 | 08 | 8 | none | 8 |
| Latitude raw | 12..15 | FB 6E 58 02 | 0x02586EFB = 39350011 | rad = raw/1e8 | 0.39350011 rad |
| Latitude | 12..15 | same | same | deg = rad*180/π | 22.54589554° |
| Longitude raw | 16..19 | 0D 40 DA 0B | 0x0BDA400D = 198852621 | rad = raw/1e8 | 1.98852621 rad |
| Longitude | 16..19 | same | same | deg = rad*180/π | 113.93415928° |
| Course (inferred) | 20..21 | 39 18 | 0x1839 = 6201 | deg = raw/100 | 62.01° |
| FramPs | 22..23 | 01 00 | 1 | none | 1 |
| Altitude (inferred) | 24..25 | A6 00 | 166 | m = raw | 166 m |
| HDOP (inferred) | 26..27 | 6F 02 | 623 | HDOP = raw/400 | 1.5575 (≈1.56) |
| Speed (high confidence) | 28..29 | CA 00 | 202 | m/s = raw/100 | 2.02 m/s |
| FramBufd | 30..31 | 00 00 | 0 | none | 0 |
| Gyro | 32..33 | 6F 25 | 0x256F = 9583 | none | 9583 |
| Status/quality (undetermined) | 36..37 | 6C 19 | 0x196C = 6508 | none | 6508 |
| StatusMask (undetermined) | 38..39 | 0C 82 | 0x820C = 33292 | bitmask | 0x820C |
| Checksum | 58..59 | BB 3B | 0x3BBB | see below | OK |

The scale factors for Course/Altitude/HDOP (/100, /400, m) were inferred from the order of magnitude and behaviour of the values across multiple frames. Speed, Gyro, latitude and longitude are the fields that line up best with the data.
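
The XOR check and the DrCalibration mapping for the `FF FF` block frames can be tested directly against the captured bytes. The following is a minimal Python sketch under the assumptions made in the notes above: the last byte is the XOR of all payload bytes, `Ko = Ko_raw / 10`, and a Host→OBU Read command is `FF 89 04 <cmd> <cmd>`. The function names are hypothetical and are not part of any vendor tool.

```python
import struct
from functools import reduce


def xor_checksum(payload: bytes) -> int:
    """XOR all payload bytes together (0 for an empty payload)."""
    return reduce(lambda acc, b: acc ^ b, payload, 0)


def split_block_frame(frame: bytes) -> tuple:
    """Check an FF FF <type> <payload...> <chk> frame; return (type, payload)."""
    if len(frame) < 4 or frame[:2] != b"\xff\xff":
        raise ValueError("not an FF FF block frame")
    block_type, payload, chk = frame[2], frame[3:-1], frame[-1]
    if xor_checksum(payload) != chk:
        raise ValueError("checksum mismatch")
    return block_type, payload


def decode_dr_calibration(payload: bytes) -> dict:
    """Decode the 6-byte payload of block 0x4B (little-endian, no padding)."""
    ko_raw, ko_count, kpo, kpo_count = struct.unpack("<HBHB", payload)
    return {
        "ko": ko_raw / 10,  # assumed scale, inferred from UI Ko = 900
        "ko_count": ko_count,
        "kpo": kpo,
        "kpo_count": kpo_count,
    }


def build_read_command(block_type: int) -> bytes:
    """Build the observed Read command; the last byte repeats the command code."""
    return bytes([0xFF, 0x89, 0x04, block_type, block_type])


if __name__ == "__main__":
    block_type, payload = split_block_frame(
        bytes.fromhex("FF FF 4B 28 23 64 C6 25 00 8C"))
    print(hex(block_type), decode_dr_calibration(payload))
    print(build_read_command(0x56).hex(" "))
```

A frame with a flipped byte raises `ValueError`, which makes the sketch usable as a quick filter when joining log lines back into frames.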
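
The field conversions for the 60-byte `FF 81` telemetry frame can be reproduced with `struct`. This is only a sketch of the layout as inferred in these notes, not a confirmed specification: the scale factors (`/1e8` radians, `/100`, `/400`) are the inferred ones, the function and key names are hypothetical, and latitude/longitude are read as signed 32-bit values, which is an assumption of this sketch (the notes label them u32; both readings agree for this sample). The 16-bit checksum at offsets 58..59 is not verified here.

```python
import math
import struct

# Sample frame from the 2026-01-13 log (60 bytes).
SAMPLE = bytes.fromhex(
    "FF 81 E8 03 0D 01 EA 07 06 19 0E 08 FB 6E 58 02 "
    "0D 40 DA 0B 39 18 01 00 A6 00 6F 02 CA 00 00 00 "
    "6F 25 00 00 6C 19 0C 82 " + "00 " * 18 + "BB 3B"
)


def decode_telemetry(frame: bytes) -> dict:
    """Decode the inferred fields of an FF 81 frame (little-endian)."""
    if len(frame) != 60 or frame[:2] != b"\xff\x81":
        raise ValueError("not a 60-byte FF 81 frame")
    # Offsets 4..33: date, time, satellites, position, course, misc u16 fields.
    (day, month, year, hour, minute, second, sv_gps,
     lat_raw, lon_raw, course_raw, fram_ps, alt_raw,
     hdop_raw, speed_raw, fram_bufd, gyro) = struct.unpack_from(
        "<BBHBBBBiihHHHHHH", frame, 4)
    (status_mask,) = struct.unpack_from("<H", frame, 38)
    return {
        "date": f"{year:04d}-{month:02d}-{day:02d}",
        "time": f"{hour:02d}:{minute:02d}:{second:02d}",  # probably UTC
        "sv_gps": sv_gps,
        "lat_deg": math.degrees(lat_raw / 1e8),   # raw is radians * 1e8
        "lon_deg": math.degrees(lon_raw / 1e8),
        "course_deg": course_raw / 100,           # inferred scale
        "fram_ps": fram_ps,
        "altitude_m": alt_raw,                    # inferred unit
        "hdop": hdop_raw / 400,                   # inferred scale
        "speed_mps": speed_raw / 100,
        "fram_bufd": fram_bufd,
        "gyro": gyro,
        "status_mask": status_mask,
    }


if __name__ == "__main__":
    for key, value in decode_telemetry(SAMPLE).items():
        print(f"{key:12} {value}")
```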
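
To compare bitmask fields across captures with different checkboxes toggled, it helps to list which bits are set. A small Python sketch follows; the helper name `set_bits` is hypothetical, and which UI checkbox each bit stands for is still unknown.

```python
def set_bits(value: int, width: int) -> list:
    """Return the positions (0 = least significant) of the bits set in value."""
    return [bit for bit in range(width) if (value >> bit) & 1]


# StatusMask from the FF 81 frame: bytes 0C 82, little-endian.
status_mask = int.from_bytes(bytes.fromhex("0C 82"), "little")
print(hex(status_mask), set_bits(status_mask, 16))  # 0x820c [2, 3, 9, 15]

# GPS State flags from the FF FF 52 frame, one byte at a time.
gps_flags = bytes.fromhex("00 20 06")
print([set_bits(b, 8) for b in gps_flags])  # [[], [5], [1, 2]]
```

Diffing these lists between two captures that differ by a single checkbox would show which bit belongs to that checkbox.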

OBU和MDT私有协议数据帧 一、OBU [2026-01-21 10:07:45.968] FF FF 52 00 20 06 26 [2026-01-21 10:07:46.105] FF FF 4B 28 23 64 C6 25 [2026-01-21 10:07:46.113] 00 8C FF FF 56 2E 05 19 [2026-01-21 10:07:46.121] 00 64 2E 28 23 00 40 00 [2026-01-21 10:07:46.130] 00 00 00 33 [2026-01-21 10:07:46.458] FF FF 53 E3 20 00 00 C3 [2026-01-21 10:07:46.467] 01 27 40 2B E3 20 73 00 [2026-01-21 10:07:46.475] 00 00 00 FD 帧:FF FF 52 00 20 06 26 payload = 00 20 06 chk = 00^20^06 = 26 ✅ 这就是典型的 3-byte bitmask(24 个勾选位够用),你“bit 对应 UI 哪个勾”暂时先别钉死,等你再抓几组“勾选变化”的对照包就能反推出来。 帧:FF FF 4B 28 23 64 C6 25 00 8C 映射: Ko_raw = 0x2328 = 9000 → Ko = 9000/10 = 900 KoCount = 0x64 → 100 KPO_raw = 0x25C6 = 9670 KPOCount = 0x00 → 0 chk XOR ✅ 帧:FF FF 56 2E 05 19 00 64 2E 28 23 00 40 00 00 00 00 33 19 00 -> 25°C(当作“温度”) 因为你后面自己也用新截图验证了:UI 的 Temp 变了,但这两个字节不变。 所以它几乎可以排除是“实时温度”。 这帧看起来像“DR 标定快照 + 一些运行状态”,其中: 2E 05 作为 u16le = 0x052E = 1326 → Vcc = 1326/400 = 3.315V ✅ 2E 单字节 → Vr_raw,Vr = 2E/20 = 2.30 ✅ 28 23 → Ko_raw(再次出现)✅ 00(在 Ko 后面那个单字节)非常像 Vrev(UI 里 Vrev=0)✅ 40 很像一个 flag byte(或其中一个 flag byte)✅ 后面一串 00 基本就是保留/对齐/扩展 GPS State(3 字节 flags,高置信是“bitmask”) 完整帧:FF FF 52 00 20 06 26 idx hex 含义 0 FF 帧头 1 FF 帧头 2 52 块类型:GPS State 3 00 GPS 状态 flags[0](bitmask,当前全 0) 4 20 GPS 状态 flags[1](bitmask,bit5=1,高概率对应 UI 的 GPS 勾选) 5 06 GPS 状态 flags[2](bitmask,bit1+bit2=1,高概率对应 OPK OK、Vr OK 这类勾选) 6 26 校验:00 XOR 20 XOR 06 = 26 ✅ 这页 UI checkbox 很多,3 字节=24 个 bit 完全够装。 DrCalibration(Ko / KPO / Count,高置信) 你日志把它拆行了,但应当拼成一帧: 完整帧:FF FF 4B 28 23 64 C6 25 00 8C payload(6 字节):28 23 64 C6 25 00 idx hex 含义(小端) 0 FF 帧头 1 FF 帧头 2 4B 块类型:DrCalibration 3 28 Ko_raw 低字节 4 23 Ko_raw 高字节 → 0x2328 = 9000(UI Ko=900 很像 Ko=Ko_raw/10) 5 64 Ko Count → 0x64 = 100(UI 里 Ko 那行 Count=100) 6 C6 KPO 低字节 7 25 KPO 高字节 → 0x25C6 = 9670(UI KPO=9670) 8 00 KPO Count → 0(UI KPO 那行 Count=0) 9 8C 校验:28^23^64^C6^25^00 = 8C ✅ 完整帧:FF FF 56 2E 05 19 00 64 2E 28 23 00 40 00 00 00 33 payload(13 字节): 2E 05 19 00 64 2E 28 23 00 40 00 00 00 idx hex 目前能确定的 / 可能的含义 0 FF 帧头 1 FF 帧头 2 56 
块类型:0x56(未知块,像“配置/快照”) 3 2E 2E 05 → u16le=0x052E=1326 idx3-4 作为一个 u16le:0x052E=1326 得到 1326/400=3.315,非常接近 UI 的 Vcc=3.32 4 05 *候选字段A(见下) 5 19 19 00 → u16le=0x0019=25 25°C 是非常典型的“出厂/室温标定参考点”(很多设备会用一个固定参考温度做补偿/校准参数) 6 00 7 64 很像 Count=100(与 0x4B 的 KoCount 一致) 8 2E idx8 很可能是 Vr_raw,Vr = idx8 / 20 = 2.30 9 28 Ko_raw 低字节 10 23 Ko_raw 高字节 → 0x2328=9000(与 0x4B 完全一致) idx9-10:Ko_raw(/10) 11 00 保留/小字段 12 40 flags 或配置字节(bitmask 的可能性很高) 0x40 = 0100 0000b,看起来就是“只开了某一个 bit”。把多个 checkbox 压进一个字节/整数里(每个 bit 表示一个布尔状态)叫 bitmask/flag register,这是协议/寄存器里非常常见的省空间做法 13 00 保留 14 00 保留 15 00 保留 16 33 校验:XOR(payload)=33 ✅ idx3-4 作为一个 u16le:0x052E=1326 /400 这种比例是很常见的一类“定点电压单位”:把电压用整数表示,每 1 count = 1/400 V = 0.0025 V = 2.5 mV。所以你这帧里如果 idx3-4 取 u16le = 0x052E = 1326,那么 Vcc = 1326 / 400 = 3.315 V idx5 的 0x19=25 可能是温度(25°C),也可能是某种状态码。你截图里温度=12,但那是 10:08:48 时刻;0x56 这帧是 10:07:46,上电瞬间温度/显示刷新不同步很常见。 后续的二进制帧中会每秒更新温度 OBU 基本信息/状态(ID/FW 高置信,其余部分待验证) 完整帧: FF FF 53 E3 20 00 00 C3 01 27 40 2B E3 20 73 00 00 00 00 FD payload(16 字节): E3 20 00 00 C3 01 27 40 2B E3 20 73 00 00 00 00 idx hex 含义 0 FF 帧头 1 FF 帧头 2 53 块类型:OBU State / 基本信息 3 E3 OBUID 低字节 4 20 OBUID 高字节 → 0x20E3=8419(对上 UI 的 OBUID=8419)✅ 5 00 保留/状态 6 00 保留/状态 7 C3 状态/型号/码(待验证) 8 01 FW major = 1 ✅ 9 27 FW minor = 0x27(很像 BCD 27)→ UI 显示 1.27 ✅ 10 40 flags(待验证,可能与右上 Configuration 勾选有关) 11 2B RF/频点索引(待验证;UI Freq=439MHz 很可能由“基频+索引”计算) 12 E3 MCUID 低字节 13 20 MCUID 高字节 → 0x20E3=8419(对上 UI 的 MCUID=8419)✅ 14 73 模式/状态码(你别的日志也出现过 74,像 Standby/Working 或状态切换) 15 00 预留 16 00 预留 17 00 预留 18 00 预留 19 FD 校验:XOR(payload)=FD ✅ [2026-01-21 10:07:47.010] FF 81 E8 03 00 00 00 00 [2026-01-21 10:07:47.019] 17 3B 3B 00 00 00 00 00 [2026-01-21 10:07:47.027] 00 00 00 00 00 00 00 00 [2026-01-21 10:07:47.035] 00 00 00 00 0F 27 00 00 [2026-01-21 10:07:47.044] BD 25 00 00 62 09 00 3C [2026-01-21 10:07:47.052] 00 00 00 00 00 00 00 00 [2026-01-21 10:07:47.060] 00 00 00 00 00 00 00 00 [2026-01-21 10:07:47.069] 00 00 80 32 [2026-01-21 10:07:48.010] FF 81 E8 03 00 00 00 00 [2026-01-21 
10:07:48.018] 17 3B 3A 00 00 00 00 00 [2026-01-21 10:07:48.026] 00 00 00 00 00 00 00 00 [2026-01-21 10:07:48.035] 00 00 00 00 0F 27 00 00 [2026-01-21 10:07:48.043] 68 25 00 00 A5 0A 0C 3C [2026-01-21 10:07:48.051] 00 00 00 00 00 00 00 00 [2026-01-21 10:07:48.060] 00 00 00 00 00 00 00 00 [2026-01-21 10:07:48.068] 00 00 87 31 [2026-01-21 10:07:49.010] FF 81 E8 03 00 00 00 00 [2026-01-21 10:07:49.019] 17 3B 39 00 00 00 00 00 [2026-01-21 10:07:49.027] 00 00 00 00 00 00 00 00 [2026-01-21 10:07:49.035] 00 00 00 00 0F 27 00 00 [2026-01-21 10:07:49.044] 6B 25 00 00 01 0B 0C 3C [2026-01-21 10:07:49.052] 00 00 00 00 00 00 00 00 [2026-01-21 10:07:49.060] 00 00 00 00 00 00 00 00 [2026-01-21 10:07:49.069] 00 00 29 31 [2026-01-21 10:07:50.016] FF 81 E8 03 00 00 00 00 [2026-01-21 10:07:50.024] 17 3B 38 00 00 00 00 00 [2026-01-21 10:07:50.032] 00 00 00 00 00 00 00 00 [2026-01-21 10:07:50.041] 00 00 00 00 0F 27 00 00 [2026-01-21 10:07:50.049] 6A 25 00 00 2F 0B 0C 3C [2026-01-21 10:07:50.057] 00 00 00 00 00 00 00 00 [2026-01-21 10:07:50.066] 00 00 00 00 00 00 00 00 [2026-01-21 10:07:50.074] 00 00 FD 30 [2026-01-21 10:07:51.011] FF 81 E8 03 00 00 00 00 [2026-01-21 10:07:51.019] 17 3B 37 00 00 00 00 00 [2026-01-21 10:07:51.028] 00 00 00 00 00 00 00 00 [2026-01-21 10:07:51.036] 00 00 00 00 0F 27 00 00 [2026-01-21 10:07:51.044] 6A 25 00 00 3F 0B 0C 3C [2026-01-21 10:07:51.053] 00 00 00 00 00 00 00 00 [2026-01-21 10:07:51.061] 00 00 00 00 00 00 00 00 [2026-01-21 10:07:51.070] 00 00 EE 30 [2026-01-21 10:07:51.679] FF 81 E8 03 00 00 00 00 [2026-01-21 10:07:51.687] 02 07 35 00 00 00 00 00 [2026-01-21 10:07:51.695] 00 00 00 00 00 00 00 00 [2026-01-21 10:07:51.704] 00 00 00 00 0F 27 00 00 [2026-01-21 10:07:51.712] 6A 25 00 00 7C 0B 0C 3C [2026-01-21 10:07:51.720] 00 00 00 00 00 00 00 00 [2026-01-21 10:07:51.729] 00 00 00 00 00 00 00 00 [2026-01-21 10:07:51.737] 00 00 C8 64 [2026-01-21 10:07:52.680] FF 81 E8 03 00 00 00 00 [2026-01-21 10:07:52.688] 02 07 36 00 00 00 00 00 [2026-01-21 
10:07:52.696] 00 00 00 00 00 00 00 00 [2026-01-21 10:07:52.705] 00 00 00 00 0F 27 00 00 [2026-01-21 10:07:52.713] 6A 25 00 00 9B 0B 0C 3C [2026-01-21 10:07:52.721] 00 00 00 00 00 00 00 00 [2026-01-21 10:07:52.730] 00 00 00 00 00 00 00 00 [2026-01-21 10:07:52.738] 00 00 A8 64 [2026-01-21 10:07:53.679] FF 81 E8 03 00 00 00 00 [2026-01-21 10:07:53.687] 02 07 37 00 00 00 00 00 [2026-01-21 10:07:53.695] 00 00 00 00 00 00 00 00 [2026-01-21 10:07:53.704] 00 00 00 00 0F 27 00 00 [2026-01-21 10:07:53.712] 6A 25 00 00 6D 0B 0C 3C [2026-01-21 10:07:53.720] 00 00 00 00 00 00 00 00 [2026-01-21 10:07:53.729] 00 00 00 00 00 00 00 00 [2026-01-21 10:07:53.737] 00 00 D5 64 [2026-01-21 10:07:54.839] FF 81 E8 03 00 00 00 00 [2026-01-21 10:07:54.848] 02 07 38 00 00 00 00 00 [2026-01-21 10:07:54.856] 00 00 00 00 00 00 00 00 [2026-01-21 10:07:54.864] 00 00 00 00 0F 27 00 00 [2026-01-21 10:07:54.873] 6A 25 00 00 C9 0B 0C 3C [2026-01-21 10:07:54.881] 00 00 00 00 00 00 00 00 [2026-01-21 10:07:54.889] 00 00 00 00 00 00 00 00 [2026-01-21 10:07:54.898] 00 00 78 64 [2026-01-21 10:07:55.679] FF 81 E8 03 00 00 00 00 [2026-01-21 10:07:55.687] 02 07 39 00 00 00 00 00 [2026-01-21 10:07:55.695] 00 00 00 00 00 00 00 00 [2026-01-21 10:07:55.704] 00 00 00 00 0F 27 00 00 [2026-01-21 10:07:55.712] 6A 25 00 00 BA 0B 0C 3C [2026-01-21 10:07:55.720] 00 00 00 00 00 00 00 00 [2026-01-21 10:07:55.729] 00 00 00 00 00 00 00 00 [2026-01-21 10:07:55.737] 00 00 86 64 [2026-01-21 10:07:56.679] FF 81 E8 03 00 00 00 00 [2026-01-21 10:07:56.687] 02 07 3A 00 00 00 00 00 [2026-01-21 10:07:56.696] 00 00 00 00 00 00 00 00 [2026-01-21 10:07:56.704] 00 00 00 00 0F 27 00 00 [2026-01-21 10:07:56.712] 69 25 00 00 D8 0B 0C 3C [2026-01-21 10:07:56.721] 00 00 00 00 00 00 00 00 [2026-01-21 10:07:56.729] 00 00 00 00 00 00 00 00 [2026-01-21 10:07:56.737] 00 00 68 64 [2026-01-21 10:07:57.679] FF 81 E8 03 00 00 00 00 [2026-01-21 10:07:57.687] 02 07 3B 00 00 00 00 00 [2026-01-21 10:07:57.696] 00 00 00 00 00 00 00 00 [2026-01-21 
10:07:57.704] 00 00 00 00 0F 27 00 00 [2026-01-21 10:07:57.712] 6A 25 00 00 E8 0B 0C 3C [2026-01-21 10:07:57.721] 00 00 00 00 00 00 00 00 [2026-01-21 10:07:57.729] 00 00 00 00 00 00 00 00 [2026-01-21 10:07:57.737] 00 00 56 64 [2026-01-21 10:07:58.678] FF 81 E8 03 00 00 00 00 [2026-01-21 10:07:58.686] 02 08 00 00 00 00 00 00 [2026-01-21 10:07:58.694] 00 00 00 00 00 00 00 00 [2026-01-21 10:07:58.702] 00 00 00 00 0F 27 00 00 [2026-01-21 10:07:58.711] 69 25 00 00 E8 0B 0C 3C [2026-01-21 10:07:58.719] 00 00 00 00 00 00 00 00 [2026-01-21 10:07:58.728] 00 00 00 00 00 00 00 00 [2026-01-21 10:07:58.736] 00 00 92 63 [2026-01-21 10:07:59.679] FF 81 E8 03 00 00 00 00 [2026-01-21 10:07:59.687] 02 08 01 00 00 00 00 00 [2026-01-21 10:07:59.695] 00 00 00 00 00 00 00 00 [2026-01-21 10:07:59.704] 00 00 00 00 0F 27 00 00 [2026-01-21 10:07:59.712] 6A 25 00 00 C9 0B 0C 3C [2026-01-21 10:07:59.720] 00 00 00 00 00 00 00 00 [2026-01-21 10:07:59.729] 00 00 00 00 00 00 00 00 [2026-01-21 10:07:59.737] 00 00 AF 63 [2026-01-21 10:08:00.780] FF 81 E8 03 00 00 00 00 [2026-01-21 10:08:00.788] 02 08 02 00 32 E9 8B FE [2026-01-21 10:08:00.797] 48 04 FC 0A 1F E3 01 00 [2026-01-21 10:08:00.805] 00 00 00 00 0F 27 00 00 [2026-01-21 10:08:00.813] 69 25 00 00 C9 0B 0C 3C [2026-01-21 10:08:00.822] 00 00 00 00 00 00 00 00 [2026-01-21 10:08:00.830] 00 00 00 00 00 00 00 00 [2026-01-21 10:08:00.839] 00 00 8E 89 [2026-01-21 10:08:01.698] FF 81 E8 03 00 00 00 00 [2026-01-21 10:08:01.707] 02 08 03 00 EC D8 8B FE [2026-01-21 10:08:01.716] 67 05 FC 0A 48 E3 01 00 [2026-01-21 10:08:01.723] 00 00 00 00 0F 27 00 00 [2026-01-21 10:08:01.732] 69 25 00 00 D8 0B 0C 3C [2026-01-21 10:08:01.740] 00 00 00 00 00 00 00 00 [2026-01-21 10:08:01.748] 00 00 00 00 00 00 00 00 [2026-01-21 10:08:01.757] 00 00 7C 98 [2026-01-21 10:08:02.699] FF 81 E8 03 00 00 00 00 [2026-01-21 10:08:02.708] 02 08 04 00 99 C8 8B FE [2026-01-21 10:08:02.716] 87 06 FC 0A 72 E3 01 00 [2026-01-21 10:08:02.724] 00 00 00 00 0F 27 00 00 [2026-01-21 
10:08:02.733] 69 25 00 00 F7 0B 0C 3C [2026-01-21 10:08:02.741] 00 00 00 00 00 00 00 00 [2026-01-21 10:08:02.749] 00 00 00 00 00 00 00 00 [2026-01-21 10:08:02.758] 00 00 65 A7 [2026-01-21 10:08:03.699] FF 81 E8 03 00 00 00 00 [2026-01-21 10:08:03.707] 02 08 05 00 47 B8 8B FE [2026-01-21 10:08:03.716] A8 07 FC 0A 9C E3 01 00 [2026-01-21 10:08:03.724] 00 00 00 00 0F 27 00 00 [2026-01-21 10:08:03.732] 69 25 00 00 07 0C 0C 3C [2026-01-21 10:08:03.741] 00 00 00 00 00 00 00 00 [2026-01-21 10:08:03.749] 00 00 00 00 00 00 00 00 [2026-01-21 10:08:03.758] 00 00 5B B6 [2026-01-21 10:08:04.699] FF 81 E8 03 00 00 00 00 [2026-01-21 10:08:04.707] 02 08 06 00 F4 A7 8B FE [2026-01-21 10:08:04.716] C9 08 FC 0A C5 E3 01 00 [2026-01-21 10:08:04.724] 00 00 00 00 0F 27 00 00 [2026-01-21 10:08:04.734] 6A 25 00 00 F7 0B 0C 3C [2026-01-21 10:08:04.741] 00 00 00 00 00 00 00 00 [2026-01-21 10:08:04.749] 00 00 00 00 00 00 00 00 [2026-01-21 10:08:04.757] 00 00 72 C5 [2026-01-21 10:08:05.701] FF 81 E8 03 00 00 00 00 [2026-01-21 10:08:05.709] 02 08 07 00 8E 39 90 FE [2026-01-21 10:08:05.718] 64 D0 ED 0A 32 D8 01 00 [2026-01-21 10:08:05.727] 00 00 00 00 0F 27 00 00 [2026-01-21 10:08:05.736] 69 25 00 00 35 0C 0C 3C [2026-01-21 10:08:05.745] 00 00 00 00 00 00 00 00 [2026-01-21 10:08:05.754] 00 00 00 00 00 00 00 00 [2026-01-21 10:08:05.761] 00 00 9C 77 [2026-01-21 10:08:06.872] FF 81 E8 03 00 00 00 00 [2026-01-21 10:08:06.880] 02 08 08 00 EA D4 88 FE [2026-01-21 10:08:06.889] A2 B9 F9 0A FE EA 01 00 [2026-01-21 10:08:06.899] 00 00 00 00 0F 27 00 00 [2026-01-21 10:08:06.905] 6B 25 00 00 35 0C 0C 3C [2026-01-21 10:08:06.913] 00 00 00 00 00 00 00 00 [2026-01-21 10:08:06.921] 00 00 00 00 00 00 00 00 [2026-01-21 10:08:06.930] 00 00 2F E0 [2026-01-21 10:08:07.701] FF 81 E8 03 00 00 00 00 [2026-01-21 10:08:07.709] 02 08 09 00 C0 C3 88 FE [2026-01-21 10:08:07.719] AB BA F9 0A 2A EB 01 00 [2026-01-21 10:08:07.728] 00 00 00 00 0F 27 00 00 [2026-01-21 10:08:07.737] 69 25 00 00 35 0C 0C 3C [2026-01-21 
10:08:07.743] 00 00 00 00 00 00 00 00 [2026-01-21 10:08:07.751] 00 00 00 00 00 00 00 00 [2026-01-21 10:08:07.760] 00 00 25 F0 [2026-01-21 10:08:08.699] FF 81 E8 03 00 00 00 00 [2026-01-21 10:08:08.708] 02 08 0A 00 96 B2 88 FE [2026-01-21 10:08:08.718] B5 BB F9 0A 56 EB 01 00 [2026-01-21 10:08:08.727] 00 00 00 00 0F 27 00 00 [2026-01-21 10:08:08.734] 6A 25 00 00 54 0C 0C 3C [2026-01-21 10:08:08.741] 00 00 00 00 00 00 00 00 [2026-01-21 10:08:08.749] 00 00 00 00 00 00 00 00 [2026-01-21 10:08:08.758] 00 00 F8 FF [2026-01-21 10:08:09.698] FF 81 E8 03 00 00 00 00 [2026-01-21 10:08:09.707] 02 08 0B 00 6C A1 88 FE [2026-01-21 10:08:09.717] BF BC F9 0A 82 EB 01 00 [2026-01-21 10:08:09.726] 00 00 00 00 0F 27 00 00 [2026-01-21 10:08:09.735] 68 25 00 00 54 0C 0C 3C [2026-01-21 10:08:09.744] 00 00 00 00 00 00 00 00 [2026-01-21 10:08:09.751] 00 00 00 00 00 00 00 00 [2026-01-21 10:08:09.757] 00 00 ED 0F [2026-01-21 10:08:10.698] FF 81 E8 03 00 00 00 00 [2026-01-21 10:08:10.707] 02 08 0C 00 42 90 88 FE [2026-01-21 10:08:10.717] C9 BD F9 0A AE EB 01 00 [2026-01-21 10:08:10.726] 00 00 00 00 0F 27 00 00 [2026-01-21 10:08:10.735] 69 25 00 00 44 0C 0C 3C [2026-01-21 10:08:10.741] 00 00 00 00 00 00 00 00 [2026-01-21 10:08:10.749] 00 00 00 00 00 00 00 00 [2026-01-21 10:08:10.757] 00 00 EF 1F [2026-01-21 10:08:11.697] FF 81 E8 03 00 00 00 00 [2026-01-21 10:08:11.705] 02 08 0D 00 19 7F 88 FE [2026-01-21 10:08:11.715] D4 BE F9 0A DA EB 01 00 [2026-01-21 10:08:11.724] 00 00 00 00 0F 27 00 00 [2026-01-21 10:08:11.732] 69 25 00 00 54 0C 0C 3C [2026-01-21 10:08:11.739] 00 00 00 00 00 00 00 00 [2026-01-21 10:08:11.747] 00 00 00 00 00 00 00 00 [2026-01-21 10:08:11.755] 00 00 D0 2F [2026-01-21 10:08:12.871] FF 81 E8 03 00 00 00 00 [2026-01-21 10:08:12.879] 02 08 0E 00 F0 6D 88 FE [2026-01-21 10:08:12.888] DF BF F9 0A 06 EC 01 00 [2026-01-21 10:08:12.897] 00 00 00 00 0F 27 00 00 [2026-01-21 10:08:12.904] 69 25 00 00 44 0C 0C 3C [2026-01-21 10:08:12.913] 00 00 00 00 00 00 00 00 [2026-01-21 
10:08:12.921] 00 00 00 00 00 00 00 00 [2026-01-21 10:08:12.930] 00 00 D1 3F

Commands sent to the OBU when Read was clicked in the UI (earlier capture):

    [2026-01-19 10:05:10.884] FF 89 04 56 56
    [2026-01-19 10:05:10.961] FF 89 04 4B 4B
    [2026-01-19 10:05:11.164] FF 89 04 53 53

| Byte index | Value | Meaning |
|---|---|---|
| 0 | FF | Frame header |
| 1 | 89 | "Host→OBU command" marker (differs from the FF FF used for OBU→Host) |
| 2 | 04 | Looks like a length or a fixed function code (constant 04 for Read, at least) |
| 3 | xx | Command code: 56 / 4B / 53 |
| 4 | xx | Checksum (here equal to the command code) |

The checksum on this command looks "lazy": the last byte simply equals cmd, which is why you see 56 56 / 4B 4B / 53 53. This is also what an XOR over a one-byte payload would produce, so it may be the same XOR scheme the OBU→Host frames use.

OBU replies:

    [2026-01-19 10:21:37.452] FF FF 56 2E 05 19 00 64
    [2026-01-19 10:21:37.461] 2E 28 23 00 40 00 00 00
    [2026-01-19 10:21:37.468] 00 33
    [2026-01-19 10:21:37.968] FF FF 53 E3 20 00 00 C3
    [2026-01-19 10:21:37.976] 01 27 40 2B E3 20 73 00
    [2026-01-19 10:21:37.987] 00 00 00 FD
    [2026-01-19 10:21:38.967] FF FF 4B 28 23 64 C6 25
    [2026-01-19 10:21:38.974] 00 8C

Commands sent to the OBU when Read was clicked in the UI:

    [2026-01-19 10:17:03.590] FF 89 04 53 53
    [2026-01-19 10:17:04.591] 24 40 43 3F 0D 0A

OBU replies:

    [2026-01-15 13:39:32.955] FF FF 53 E3 20 00 00 C3
    [2026-01-15 13:39:32.964] 01 27 40 2B E3 20 73 00
    [2026-01-15 13:39:32.974] 00 00 00 FD
    [2026-01-15 13:39:35.955] FF FF 53 E3 20 00 00 C3
    [2026-01-15 13:39:35.964] 01 27 40 2B E3 20 74 00
    [2026-01-15 13:39:35.974] 00 00 00 FA

Periodic FF 81 frame (60 bytes):

    [2026-01-13 14:25:14.972] FF 81 E8 03 0D 01 EA 07
    [2026-01-13 14:25:14.980] 06 19 0E 08 FB 6E 58 02
    [2026-01-13 14:25:14.992] 0D 40 DA 0B 39 18 01 00
    [2026-01-13 14:25:15.000] A6 00 6F 02 CA 00 00 00
    [2026-01-13 14:25:15.007] 6F 25 00 00 6C 19 0C 82
    [2026-01-13 14:25:15.020] 00 00 00 00 00 00 00 00
    [2026-01-13 14:25:15.025] 00 00 00 00 00 00 00 00
    [2026-01-13 14:25:15.034] 00 00 BB 3B

1) Byte-by-byte breakdown

| idx | hex | dec | Field / interpretation |
|---|---|---|---|
| 0 | FF | 255 | Frame header / sync (fixed) |
| 1 | 81 | 129 | Frame header / sync (fixed) |
| 2 | E8 | 232 | Frame type / constant (same in every frame) |
| 3 | 03 | 3 | Frame type / constant (same in every frame) |
| 4 | 0D | 13 | Day (13) |
| 5 | 01 | 1 | Month (1) |
| 6 | EA | 234 | Year (LE), low byte |
| 7 | 07 | 7 | Year (LE), high byte → 0x07EA = 7×256 + 234 = 2026 |
| 8 | 06 | 6 | Hour (very likely the UTC hour) |
| 9 | 19 | 25 | Minute (25) |
| 10 | 0E | 14 | Second (14; increases by 1 in each following frame) |
| 11 | 08 | 8 | SvGPS (GPS satellite count = 8) |
| 12 | FB | 251 | Latitude u32 (LE) byte0 |
| 13 | 6E | 110 | Latitude u32 (LE) byte1 |
| 14 | 58 | 88 | Latitude u32 (LE) byte2 |
| 15 | 02 | 2 | Latitude u32 (LE) byte3 |
| 16 | 0D | 13 | Longitude u32 (LE) byte0 |
| 17 | 40 | 64 | Longitude u32 (LE) byte1 |
| 18 | DA | 218 | Longitude u32 (LE) byte2 |
| 19 | 0B | 11 | Longitude u32 (LE) byte3 |

Latitude and longitude are stored as radians × 1e8 and then converted to degrees.

Latitude: bytes FB 6E 58 02 (byte0→byte3), assembled little-endian into a 32-bit value: hex 0x02586EFB, decimal 39,350,011. In radians: 39,350,011 / 1e8 = 0.39350011 rad. In degrees: deg = rad × 180 / π, so 0.39350011 × 180/π ≈ 22.54589554°, i.e. Latitude ≈ 22.545896°.

Longitude: bytes 0D 40 DA 0B, hex 0x0BDA400D, decimal 198,852,621. In radians: 198,852,621 / 1e8 = 1.98852621 rad. In degrees: 1.98852621 × 180/π ≈ 113.93415928°, i.e. Longitude ≈ 113.934159°.

| idx | hex | dec | Field / interpretation |
|---|---|---|---|
| 20 | 39 | 57 | Course int16 (LE) byte0 |
| 21 | 18 | 24 | Course int16 (LE) byte1 → 0x1839 = 24×256 + 57 = 6201; Course ≈ 6201 / 100 = 62.01° |
| 22 | 01 | 1 | FramPs u16 (LE) byte0 |
| 23 | 00 | 0 | FramPs u16 (LE) byte1 → FramPs = b22 + (b23<<8) = 0x0001 = 1 |
| 24 | A6 | 166 | Altitude u16 (LE) byte0 |
| 25 | 00 | 0 | Altitude u16 (LE) byte1 → 0x00A6 = 166 (possibly altitude) |
| 26 | 6F | 111 | HDOP u16 (LE) byte0; HDOP_raw = b26 + (b27<<8) |
| 27 | 02 | 2 | HDOP u16 (LE) byte1 → 0x026F = 623; HDOP ≈ HDOP_raw / 400.0 = 1.5575 |
| 28 | CA | 202 | Speed u16 (LE) byte0; Speed_raw = b28 + (b29<<8) |
| 29 | 00 | 0 | Speed u16 (LE) byte1 → 202; Speed (m/s) ≈ Speed_raw / 100.0 = 2.02 m/s |
| 30 | 00 | 0 | FramBufd u16 (LE) byte0 (possibly FramBufd) |
| 31 | 00 | 0 | FramBufd u16 (LE) byte1 |
| 32 | 6F | 111 | Gyro u16 (LE) byte0; Gyro = b32 + (b33<<8) |
| 33 | 25 | 37 | Gyro u16 (LE) byte1 → 0x256F = 9583, which matches Gyro=9583 in the UI exactly |
| 34 | 00 | 0 | Reserved / counter u16 (LE) byte0 (currently 0) |
| 35 | 00 | 0 | Reserved / counter u16 (LE) byte1 |
| 36 | 6C | 108 | Status / quality u16 (LE) byte0 (note: voltage is around 11 V to 12 V) |
| 37 | 19 | 25 | Status / quality u16 (LE) byte1 (note: Temp, temperature) |
| 38 | 0C | 12 | StatusMask u16 (LE) byte0 |
| 39 | 82 | 130 | StatusMask u16 (LE) byte1 → 0x820C |

FramPs is mostly 1 here and changes to 0 later (it looks like some kind of state or counting switch); the raw value is usually displayed directly.

The screenshot also shows many other fields, such as StartCount, Odometer, DrSpeed and OdoPs, all of them 0. They do not appear in this binary frame because they belong to query/parameter frames, which the OBU only returns after Read is clicked.

StatusMask: byte[38] = 0x0C, byte[39] = 0x82, value = 0x0C + (0x82<<8) = 0x820C = 33292. In binary, 0x820C = 1000 0010 0000 1100b. Only 4 bits are set, and they map exactly onto 4 checked items in the UI: 0x8000 (bit15), 0x0200 (bit9), 0x0008 (bit3), 0x0004 (bit2). Using each bit of a 16-bit value as an on/off switch is a typical bitmask/flag register. Many protocols do this: 16 boolean states packed into one 16-bit field, with undefined bits usually left as 0.

| idx | hex | dec | Field / interpretation |
|---|---|---|---|
| 40..57 | 00 | 0 | Reserved / extension area (all 18 bytes are 0) |
| 58 | BB | 187 | Checksum (LE) byte0 |
| 59 | 3B | 59 | Checksum (LE) byte1 → 0x3BBB |

2) Conversion table for the key fields (multi-byte values assembled little-endian, with units and formulas)

| Field | offset | Raw hex (LE order) | Raw integer | Formula | Result |
|---|---|---|---|---|---|
| Year | 6..7 | EA 07 | 0x07EA = 2026 | n/a | 2026 |
| Time (H:M:S) | 8..10 | 06 19 0E | n/a | n/a | 06:25:14 (looks like UTC) |
| SvGPS | 11 | 08 | 8 | n/a | 8 |
| Latitude raw | 12..15 | FB 6E 58 02 | 0x02586EFB = 39350011 | rad = raw/1e8 | 0.39350011 rad |
| Latitude | 12..15 | same | same | deg = rad*180/π | 22.54589554° |
| Longitude raw | 16..19 | 0D 40 DA 0B | 0x0BDA400D = 198852621 | rad = raw/1e8 | 1.98852621 rad |
| Longitude | 16..19 | same | same | deg = rad*180/π | 113.93415928° |
| Course (inferred) | 20..21 | 39 18 | 0x1839 = 6201 | deg = raw/100 | 62.01° |
| FramPs | 22..23 | 01 00 | 1 | n/a | 1 |
| Altitude (inferred) | 24..25 | A6 00 | 166 | m = raw | 166 m |
| HDOP (inferred) | 26..27 | 6F 02 | 623 | HDOP = raw/400 | 1.5575 (≈1.56) |
| Speed (high confidence) | 28..29 | CA 00 | 202 | m/s = raw/100 | 2.02 m/s |
| FramBufd | 30..31 | 00 00 | 0 | n/a | 0 |
| Gyro | 32..33 | 6F 25 | 0x256F = 9583 | n/a | 9583 |
| Status / quality (TBD) | 36..37 | 6C 19 | 0x196C = 6508 | n/a | 6508 |
| StatusMask (TBD) | 38..39 | 0C 82 | 0x820C = 33292 | bitmask | 0x820C |
| Checksum | 58..59 | BB 3B | 0x3BBB | see below | OK |

The scale factors for Course, Altitude and HDOP (/100, /400, m) were inferred from the magnitude and shape of the values across multiple frames. Speed, Gyro, latitude and longitude are the fields that line up best with the data.
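The field layout above can be sketched as a small Python decoder. This is only a sketch under the assumptions made in these notes: the offsets, scale factors and field names are the inferred ones from the tables, the function names (`xor_checksum`, `build_read_command`, `check_reply`, `decode_position_frame`) are made up for illustration, and treating the Read command's last byte as an XOR over a one-byte payload is a guess that merely fits the three captured commands. The 2-byte checksum at offsets 58..59 is not verified because its algorithm is not established here.

```python
import math
import struct


def xor_checksum(payload: bytes) -> int:
    """XOR of all payload bytes (the scheme observed on FF FF frames)."""
    c = 0
    for b in payload:
        c ^= b
    return c


def build_read_command(cmd: int) -> bytes:
    """Host->OBU Read command: FF 89 04 <cmd> <chk>. Assumes chk = XOR of <cmd>."""
    return bytes([0xFF, 0x89, 0x04, cmd, xor_checksum(bytes([cmd]))])


def check_reply(frame: bytes) -> bool:
    """OBU->Host frame: FF FF <type> <payload...> <xor of payload>."""
    return frame[:2] == b"\xff\xff" and xor_checksum(frame[3:-1]) == frame[-1]


def decode_position_frame(frame: bytes) -> dict:
    """Decode the 60-byte FF 81 frame using the inferred little-endian layout."""
    if len(frame) != 60 or frame[:2] != b"\xff\x81":
        raise ValueError("not a 60-byte FF 81 frame")
    day, month, year, hour, minute, second, sv = struct.unpack_from("<BBHBBBB", frame, 4)
    (lat, lon, course, fram_ps, alt, hdop, speed,
     fram_bufd, gyro, _reserved, quality, mask) = struct.unpack_from("<IIhHHHHHHHHH", frame, 12)
    return {
        "date": (year, month, day),
        "time": (hour, minute, second),       # probably UTC
        "sv_gps": sv,
        "lat_deg": math.degrees(lat / 1e8),   # stored as radians * 1e8
        "lon_deg": math.degrees(lon / 1e8),
        "course_deg": course / 100,           # inferred scale
        "fram_ps": fram_ps,
        "altitude_m": alt,                    # inferred
        "hdop": hdop / 400,                   # inferred scale
        "speed_mps": speed / 100,
        "fram_bufd": fram_bufd,
        "gyro": gyro,
        "status_quality_raw": quality,        # meaning still open
        "status_bits": [i for i in range(16) if mask >> i & 1],
    }


SAMPLE = bytes.fromhex(
    "FF 81 E8 03 0D 01 EA 07 06 19 0E 08 FB 6E 58 02"
    "0D 40 DA 0B 39 18 01 00 A6 00 6F 02 CA 00 00 00"
    "6F 25 00 00 6C 19 0C 82" + "00" * 18 + "BB 3B"
)

if __name__ == "__main__":
    for name, value in decode_position_frame(SAMPLE).items():
        print(f"{name}: {value}")
    print(build_read_command(0x56).hex(" "))
    print(check_reply(bytes.fromhex("FF FF 4B 28 23 64 C6 25 00 8C")))
```

Using `struct.unpack_from` with an explicit `<` prefix keeps the byte order little-endian and disables padding, so the format string can be read side by side with the offset column of the table.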

istio

What is Istio?

Istio is an open source service mesh that layers transparently onto existing distributed applications. Its features provide a uniform and more efficient way to secure, connect and monitor services. Istio is the path to load balancing, service-to-service authentication and monitoring, with few or no changes to service code.

Features:

- Secure service-to-service communication in a cluster with mutual TLS encryption and strong identity-based authentication and authorization
- Automatic load balancing for HTTP, gRPC, WebSocket and TCP traffic
- Fine-grained control of traffic behavior with rich routing rules, retries, failovers and fault injection
- A pluggable policy layer and configuration API supporting access controls, rate limits and quotas
- Automatic metrics, logs and traces for all traffic within a cluster, including cluster ingress and egress

How does it work?

Istio uses proxies to intercept all of your network traffic, which allows a broad set of application-aware features based on the configuration you set.

The control plane takes your desired configuration and its view of the services, and dynamically programs the proxy servers, updating them as the rules or the environment change.

The data plane is the communication between services. Without a service mesh, the network does not understand the traffic being sent and cannot make decisions based on the type of traffic, or on where it comes from or goes to.

Istio supports two data plane modes:

- Sidecar mode, which deploys an Envoy proxy alongside each Pod you start in the cluster, or alongside services running on VMs. (Sidecar mode is what is used below.)
- Ambient mode, which uses a per-node layer 4 proxy and, optionally, a per-namespace Envoy proxy for layer 7 features.

I. Deployment

    #Official site
    https://istio.io/
    #Download
    https://github.com/istio/istio/releases/download/1.28.2/istio-1.28.2-linux-amd64.tar.gz
    #Unpack
    tar -zxvf istio-1.28.2-linux-amd64.tar.gz
    #Deploy
    root@k8s-01:/woke/istio# ls
    istio-1.28.2  istio-1.28.2-linux-amd64.tar.gz
    root@k8s-01:/woke/istio# cd istio-1.28.2/
    root@k8s-01:/woke/istio/istio-1.28.2# export PATH=$PWD/bin:$PATH
    root@k8s-01:/woke/istio/istio-1.28.2# istioctl install -f samples/bookinfo/demo-profile-no-gateways.yaml -y
    ✔ Istio core installed ⛵️
    Processing resources for Istiod. Waiting for Deployment/istio-system/istiod
    ✔ Istiod installed 🧠
    ✔ Installation complete
    root@k8s-01:/woke/istio/istio-1.28.2# kubectl label namespace default istio-injection=enabled
    namespace/default labeled

    #Check the Gateway API CRDs
    root@k8s-01:/woke/istio/istio-1.28.2# kubectl get crd gateways.gateway.networking.k8s.io
    NAME                                 CREATED AT
    gateways.gateway.networking.k8s.io   2025-11-17T15:05:26Z
    root@k8s-01:/woke/istio/istio-1.28.2# kubectl get crd httproutes.gateway.networking.k8s.io
    NAME                                   CREATED AT
    httproutes.gateway.networking.k8s.io   2025-11-17T15:05:26Z
    root@k8s-01:/woke/istio/istio-1.28.2# kubectl get crd gatewayclasses.gateway.networking.k8s.io
    NAME                                       CREATED AT
    gatewayclasses.gateway.networking.k8s.io   2025-11-17T15:05:26Z
    root@k8s-01:/woke/istio/istio-1.28.2# kubectl get crd | egrep 'tlsroutes|tcproutes|udproutes|grpcroutes\.gateway\.networking\.k8s\.io'
    grpcroutes.gateway.networking.k8s.io   2025-11-17T15:05:26Z

    #Check that Bookinfo answers from inside the mesh
    root@k8s-01:/woke/istio/istio-1.28.2# kubectl exec "$(kubectl get pod -l app=ratings -o jsonpath='{.items[0].metadata.name}')" -c ratings -- curl -sS productpage:9080/productpage | grep -o "<title>.*</title>"
    <title>Simple Bookstore App</title>

    #Create the Istio Gateway and VirtualService
    root@k8s-01:/woke/istio/istio-1.28.2# kubectl apply -f samples/bookinfo/networking/bookinfo-gateway.yaml
    gateway.networking.istio.io/bookinfo-gateway created
    virtualservice.networking.istio.io/bookinfo created
    #The short name "gateway" resolves to the Kubernetes Gateway API resource here, so only the existing traefik Gateway is listed
    root@k8s-01:/woke/istio/istio-1.28.2# kubectl get gateway
    NAME         CLASS     ADDRESS   PROGRAMMED   AGE
    traefik-gw   traefik             True         25d
    root@k8s-01:/woke/istio/istio-1.28.2# kubectl get pod -A | grep gateway
    #Query the Istio resources by their fully qualified names
    root@k8s-01:/woke/istio/istio-1.28.2# kubectl get gateways.networking.istio.io -A
    NAMESPACE   NAME               AGE
    default     bookinfo-gateway   2m55s
    root@k8s-01:/woke/istio/istio-1.28.2# kubectl get virtualservices.networking.istio.io -A
    NAMESPACE   NAME       GATEWAYS               HOSTS   AGE
    default     bookinfo   ["bookinfo-gateway"]   ["*"]   2m55s
    #No ingress gateway exists yet, because the first install used the no-gateways profile
    root@k8s-01:/woke/istio/istio-1.28.2# kubectl get svc -n istio-system | egrep 'ingress|gateway'
    root@k8s-01:/woke/istio/istio-1.28.2# kubectl get pods -n istio-system | egrep 'ingress|gateway'

    #Reinstall with the demo profile to get the ingress/egress gateways
    root@k8s-01:/woke/istio/istio-1.28.2# istioctl install -y --set profile=demo
    istioctl: command not found
    root@k8s-01:/woke/istio/istio-1.28.2# ls
    bin  LICENSE  manifests  manifest.yaml  README.md  samples  tools
    root@k8s-01:/woke/istio/istio-1.28.2# export PATH=$PWD/bin:$PATH
    root@k8s-01:/woke/istio/istio-1.28.2# istioctl version
    client version: 1.28.2
    control plane version: 1.28.2
    data plane version: 1.28.2 (6 proxies)
    root@k8s-01:/woke/istio/istio-1.28.2# istioctl install -y --set profile=demo
    ✔ Istio core installed ⛵️
    ✔ Istiod installed 🧠
    ✔ Egress gateways installed 🛫
    ✔ Ingress gateways installed 🛬
    ✔ Installation complete
    root@k8s-01:/woke/istio/istio-1.28.2# kubectl get pods -n istio-system | egrep 'istio-ingressgateway|istiod'
    istio-ingressgateway-796f5cf647-n28c8   1/1   Running   0   107s
    istiod-5c84f8c79d-q7p2x                 1/1   Running   0   91m
    root@k8s-01:/woke/istio/istio-1.28.2# kubectl get svc -n istio-system | egrep 'istio-ingressgateway'
    istio-ingressgateway   LoadBalancer   10.99.189.246   <pending>   15021:31689/TCP,80:32241/TCP,443:30394/TCP,31400:31664/TCP,15443:32466/TCP   107s

    #Get it working end to end
    root@k8s-01:/woke/istio/istio-1.28.2# kubectl port-forward -n istio-system svc/istio-ingressgateway 8080:80
    Forwarding from 127.0.0.1:8080 -> 8080
    Forwarding from [::1]:8080 -> 8080
    Handling connection for 8080
    #Access it
    root@k8s-01:/woke/istio/istio-1.28.2# curl -sS http://127.0.0.1:8080/productpage | grep -o "<title>.*</title>"
    <title>Simple Bookstore App</title>

1) Finer-grained release strategies (more than weighted canary)

Canary by request attributes (more precise):
- By header (user / device / region / canary flag)
- By cookie (a specific user group)
- By URI (certain paths go to the new version)
Example: requests carrying x-canary: true go to v2, everything else goes to v1.

Traffic mirroring (shadow):
- Real traffic still goes to v1
- A copy is sent to v2 for load testing or validation, without affecting the response returned to users
Good for validating the behavior and performance of a new version.

2) Rate limiting and protection (resilience)

Timeouts / retries / connection pools:
- timeout: 2s
- retries: attempts: 3
- Connection pool limits (so the called service is not overwhelmed)

Circuit breaking / outlier detection:
- If a pod or instance keeps returning 5xx, eject it for a period of time

Fault injection (chaos testing):
- Deliberately inject delays or errors (only for a certain class of requests) to verify system resilience

3) Traffic control and security

mTLS encryption (service to service):
- Automatically encrypts east-west traffic inside the mesh
- STRICT or PERMISSIVE mode
- Configured with PeerAuthentication / DestinationRule

Fine-grained access control (RBAC):
- Who may access whom, on which path, with which method
- Supports conditions on JWT claims, source principal, namespace, IP and so on
- Uses AuthorizationPolicy

JWT/OIDC validation (API authentication):
- Validate tokens at the gateway or at the service, so the application does not have to implement it
- Uses RequestAuthentication + AuthorizationPolicy

4) Ingress gateway capabilities (north-south)

Multiple domains / multiple certificates (HTTPS/TLS):
- Gateway with multiple SNI hosts, certificates, HTTP→HTTPS redirect

Path rewrite / redirect / routing to multiple services:
- /api goes to service A, /web goes to service B
- rewrite /v1 → / and similar

Rate limiting (usually needs EnvoyFilter or an external component):
- Global or per-key (IP, user) limits at the entry point
- (Whether Istio's native CRDs cover "global rate limiting" depends on the extension approach you use; Envoy ext-authz / a ratelimit service is common.)

5) Observability (what is behind the data you see in Kiali/Prometheus)

- Metrics: QPS, P99, error rate, TCP metrics
- Distributed tracing: Jaeger/Zipkin/Tempo (tracing has to be installed)
- Access logs: Envoy access log (to stdout or a logging system)
- Topology: the Kiali graph shows service call relationships

6) Multi-cluster / multi-mesh (more advanced)

- Multiple clusters in one mesh
- Cross-cluster service discovery and mTLS
- East-West Gateway

Six things most worth trying, starting from Bookinfo (quick to pick up):

- Header canary: send a specific user to reviews v3
- Traffic mirroring: mirror part of the traffic to v3 (responses are unaffected)
- Timeout + retries: make productpage's calls to reviews more stable
- Circuit breaking: automatically isolate bad instances when reviews returns errors
- Fault injection: inject a 2s delay on one path only, to test the user experience
- mTLS STRICT + authorization policy: block access to reviews from outside the mesh or from unauthorized services
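The header canary and the timeout/retry settings from the list above can be sketched as a VirtualService manifest generated from Python and piped to kubectl as JSON. This is a sketch under assumptions rather than the configuration used in this post: the function name `canary_virtual_service` and the resource name suffix `-canary` are made up, and the `v1`/`v2` subsets are assumed to be defined already by a DestinationRule (the Bookinfo samples ship one in samples/bookinfo/networking/destination-rule-all.yaml).

```python
import json


def canary_virtual_service(host: str, header: str = "x-canary", value: str = "true",
                           stable: str = "v1", canary: str = "v2") -> dict:
    """Build a VirtualService: requests with `header: value` go to the canary
    subset, all other requests go to the stable subset with a timeout and retries."""
    return {
        "apiVersion": "networking.istio.io/v1",
        "kind": "VirtualService",
        "metadata": {"name": f"{host}-canary"},   # hypothetical resource name
        "spec": {
            "hosts": [host],
            "http": [
                {   # Rule 1: exact header match routes to the canary subset.
                    "match": [{"headers": {header: {"exact": value}}}],
                    "route": [{"destination": {"host": host, "subset": canary}}],
                },
                {   # Rule 2: default route, with the timeout/retry values from the text.
                    "route": [{"destination": {"host": host, "subset": stable}}],
                    "timeout": "2s",
                    "retries": {"attempts": 3},
                },
            ],
        },
    }


if __name__ == "__main__":
    print(json.dumps(canary_virtual_service("reviews"), indent=2))
```

Rules in `http` are evaluated in order, so the header match has to come before the catch-all route. Assuming the script is saved as vs.py, `python3 vs.py | kubectl apply -f -` would apply it, since kubectl accepts JSON manifests as well as YAML.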


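Problem: calling the Grafana API fails

    error trying to reach service: dial tcp 10.244.2.60:3000: i/o timeout

① Background (30 seconds)

"We were deploying a monitoring stack (Prometheus + Grafana) in a Kubernetes cluster. The Grafana Pod was Running, but every request to its Pod IP from a node or from other components timed out. curl returned i/o timeout, which blocked onboarding and integration testing of the monitoring platform."

"Grafana looked healthy on the surface, but something was wrong at the network layer."

② Breaking the problem down (1 minute)

"I worked through it layer by layer instead of changing network policies right away."

1️⃣ Rule out the application first
I ran kubectl exec into the Grafana Pod and curled 127.0.0.1:3000/api/health directly. It returned 200, so Grafana itself was fine and the port was listening.
👉 Conclusion: not an application problem, not a container startup problem.

2️⃣ Decide whether it is the Service layer or the Pod network
I confirmed that the Service / Endpoint pointed at the correct Pod IP. Curling the Pod IP directly from the node still timed out. A temporary curl Pod started on the same node timed out against the Pod IP as well.
👉 Conclusion: not a host routing problem; the path to the Pod is being intercepted.

3️⃣ Confirm that the CNI and the tunnel are healthy
The cluster uses Cilium + VXLAN. A packet capture showed VXLAN traffic on 8472/UDP, so the overlay tunnel itself was working; otherwise communication across the whole cluster would have been broken.
👉 At this point basic network connectivity was essentially ruled out.

③ The breakthrough (40 seconds)

"The real breakthrough came from Cilium's own observability tooling."

On the node I ran:

    cilium monitor --type drop

Every TCP SYN to Grafana port 3000 was being dropped. The drop reason was unambiguous: Policy denied. The source identity was shown as remote-node or host.
👉 This told me plainly: it is not a connectivity problem, Cilium policy is explicitly rejecting the traffic.

④ Root cause (30 seconds)

"Looking back, the namespace Grafana runs in had a default NetworkPolicy that only allowed Prometheus Pods to reach Grafana on port 3000."

When a node / the host accesses Grafana, Cilium labels the traffic as host or remote-node. It does not carry an ordinary Pod identity, so even after I added an ipBlock to a Kubernetes NetworkPolicy, that traffic still was not matched.
👉 The essence: Cilium makes policy decisions around identity/entity, not purely on IP.

⑤ Fix (30 seconds)

"In the end I used a CiliumNetworkPolicy that explicitly allows traffic from nodes."

The policy allows fromEntities: host and fromEntities: remote-node, and only to Grafana on 3000/TCP. Once it was applied, curl from the node to the Pod IP worked immediately.

⑥ Summary and value (20 seconds)

"What stayed with me is this: with an eBPF CNI such as Cilium, you have to troubleshoot with its own observability tools rather than stopping at the Kubernetes NetworkPolicy abstraction."

"Afterwards I wrote this up as an SOP that spells out the identity and policy differences between Pod→Pod, Node→Pod and Service→Pod under Cilium, so we do not hit it again."

Common interviewer follow-ups and model answers (bonus points)

❓Follow-up 1: Why did ipBlock not take effect?
Answer: "In Cilium, node / host traffic is matched by identity (host / remote-node) first, not purely by source IP. A NetworkPolicy ipBlock does not match this kind of traffic, so you need fromEntities in a CiliumNetworkPolicy."

❓Follow-up 2: Why not use NodePort / LoadBalancer?
Answer: "NodePort or LoadBalancer would change the access path, but it widens the exposed surface, which goes against least privilege, and the ingress policy on the Grafana Pod would still have to admit that traffic. The goal here was only access from inside the nodes to a Pod service, so a precise allow rule at the CNI level is safer and easier to control."

❓Follow-up 3: How do you keep it from happening again?
Answer:
- Clearly distinguish Pod traffic from Node/Host traffic
- In clusters running Cilium: default NetworkPolicy plus CiliumNetworkPolicy for the exceptions
- When a network problem appears, go straight to cilium monitor / hubble observe to find the drop reason instead of changing rules blindly

🧠 The "hidden signals" the interviewer hears

This answer sends several strong signals:
- You understand how the CNI works underneath, not just how to write YAML
- You can locate problems with observability tools
- You troubleshoot systematically instead of changing configuration on a hunch
- You review incidents and capture what you learned

If you like, next I can also help you turn this case into a high-quality project entry for your resume, or simulate an interviewer digging deeper so you can polish the answer until it flows. In a K8s operations / platform engineering / SRE interview this case is a clear plus.

    root@k8s-01:~# cat > /tmp/cnp-allow-grafana-3000-from-nodes.yaml <<'EOF'
    apiVersion: cilium.io/v2
    kind: CiliumNetworkPolicy
    metadata:
      name: allow-grafana-3000-from-nodes
      namespace: kuboard
    spec:
      endpointSelector:
        matchLabels:
          app.kubernetes.io/name: grafana
      ingress:
      - fromEntities:
        - host
        - remote-node
        toPorts:
        - ports:
          - port: "3000"
            protocol: TCP
    EOF
    kubectl apply -f /tmp/cnp-allow-grafana-3000-from-nodes.yaml
    kubectl -n kuboard get cnp | grep allow-grafana-3000-from-nodes
    ciliumnetworkpolicy.cilium.io/allow-grafana-3000-from-nodes created
    allow-grafana-3000-from-nodes   0s   True
    root@k8s-01:~# curl -sS -m 3 http://10.244.2.60:3000/api/health
    {
      "database": "ok",
      "version": "11.2.0",
      "commit": "2a88694fd3ced0335bf3726cc5d0adc2d1858855"
    }root@k8s-01:~#

It works now. You are now a senior k8s operations engineer. Please go back over the context above and analyze it in detail, then summarize: what was the root cause, how was it fixed, and why did this happen? I also want to use this in interviews. For example, if the interviewer asks what problems I have run into at work, I can talk about this one: how I ran into it, what I tried along the way, how I finally found the cause, and how I fixed it.

Below is a full "senior K8s operations" retrospective of the Grafana Pod IP being unreachable: symptom → troubleshooting path → key evidence → root cause → fix → why it happens. At the end there is a STAR/story version you can repeat directly in an interview.

1) What was the symptom

Grafana inside the Pod was fine:
- kubectl exec grafana -- curl 127.0.0.1:3000/api/health returned OK
- Logs and the listening port were normal (Grafana started successfully)

But access to the Pod IP from the host / node timed out:
- curl http://10.244.2.60:3000/api/health → i/o timeout

Access to the Pod IP from a test Pod on the same node also timed out:
- You forced a curlimages/curl Pod onto k8s-01 and curled the Pod IP; still a timeout

This shows it is not "the host has no route"; the traffic to the Pod is being intercepted or dropped somewhere.

2) Which correct troubleshooting steps you took (process review)

A. Confirm the application is healthy first (rule out the application)
You curled Grafana from inside its own container. OK inside the container → the Grafana service and port are fine. This step matters: it gets "application/container problem" out of the way first.

B. Then verify the network path (separate host, pod, service)
kubectl get svc/ep confirmed the endpoint is 10.244.2.60:3000. curl to the Pod IP timed out, so the problem is not the Service load-balancing layer; traffic to the endpoint itself is being intercepted.

C. Check the CNI mode and the tunnel
You confirmed the Cilium configuration: routing-mode: tunnel and tunnel-protocol: vxlan. A packet capture showed VXLAN traffic on 8472/UDP in both directions, so the tunnel itself works. This is also important: the overlay is up, otherwise a lot of Pod-to-Pod communication in the cluster would be broken.

D. The most important step: use Cilium to observe the drop reason
You ran:

    cilium monitor --type drop

and immediately got the core evidence:

    xx drop (Policy denied) ... identity 6820->29952: 10.244.0.206 -> 10.244.2.60:3000 tcp SYN

and later:

    xx drop (Policy denied) ... identity remote-node->29952: 10.244.0.18 -> 10.244.2.60:3000 tcp SYN

These two lines are extremely important. They amount to telling you:
- It is not a routing problem
- It is not a port that is not listening
- Cilium policy is explicitly rejecting the SYN

At this point the root cause is pinned to NetworkPolicy / Cilium policy.

3) What was the root cause (one-sentence version)

The namespace Grafana runs in has a stricter default NetworkPolicy (only Prometheus Pods may reach Grafana:3000), while node/host traffic is labeled with the remote-node/host identity in Cilium and is not matched by the ipBlock of an ordinary K8s NetworkPolicy, so Cilium drops it as Policy denied.

In more detail, your original NetworkPolicy (kuboard/grafana) looked like this:

    ingress:
    - from:
      - podSelector:
          matchLabels:
            app.kubernetes.io/name: prometheus
      ports:
      - port: 3000

Meaning:
- Only prometheus Pods may access grafana on 3000
- Every other source (including your curl to the Pod IP from a node) is denied by default

Why did adding a K8s NetworkPolicy with ipBlock have no effect?

Because your access path is "node/host → Pod". When traffic from a node reaches Cilium it is classified by entity identity:
- host (the local node's host)
- remote-node (node traffic from other nodes)

This traffic does not have an ordinary Pod identity, and in many cases it is not matched against a NetworkPolicy ipBlock purely by source IP (especially under Cilium's implementation, where the policy decision is made by identity first).

So what you saw was:
- Allowing ipBlock: 192.168.1.0/24: still rejected as the remote-node identity
- Allowing ipBlock: 10.244.0.0/16: still rejected as the remote-node identity

and cilium monitor stated the reason explicitly: Policy denied + identity remote-node->...

4) How it was fixed (the approach that finally worked)

You used a CiliumNetworkPolicy:

    apiVersion: cilium.io/v2
    kind: CiliumNetworkPolicy
    spec:
      endpointSelector:
        matchLabels:
          app.kubernetes.io/name: grafana
      ingress:
      - fromEntities:
        - host
        - remote-node
        toPorts:
        - ports:
          - port: "3000"
            protocol: TCP

The effect of this rule:
- It explicitly allows Cilium entities: host (traffic from the local host) and remote-node (node traffic from other nodes)
- It only allows them to reach the grafana pod on 3000/TCP

That is why curl to the Pod IP from k8s-01 succeeded immediately.

5) Why does this happen (a more systematic explanation)

1) Monitoring stacks and platforms often ship their own NetworkPolicy
Many kube-prometheus-stack style or platform bundles create NetworkPolicies by default to implement "default deny + allowlist", for example: Prometheus may scrape, other sources may not reach the UI or ports. This is a secure default, but during troubleshooting it produces "the Pod is Running, yet access times out".

2) Cilium has its own identity system for node/host traffic
Cilium's policy engine does not look only at IPs; identity/entity is a first-class concept:
- Pods have an identity (based on labels)
- Node / Host / Remote-node are entities
So to open node→pod, the most reliable way is fromEntities: host/remote-node. This is the fundamental reason your final fix worked.

3) "Pod→Pod works, Node→Pod does not" is a typical signature
Because:
- Pod→Pod may be allowed by some policy (or satisfy a selector within the same namespace)
- Node→Pod is not something a podSelector can match, so it usually falls under default deny

6) Interview: how to tell this as a good troubleshooting story

Here is a version you can memorize (STAR + technical detail). Change a sentence or two to match your real environment.

Interview version (2 to 3 minutes recommended)

Situation (S)
"We deployed monitoring (Grafana/Prometheus) in a K8s cluster. The Grafana Pod was Running, but business and operations users accessing the Grafana API from nodes kept getting i/o timeout, which blocked onboarding and integration testing of the monitoring platform."

Task (T)
"I had to determine quickly whether this was an application problem, a service exposure problem or a cluster network policy problem, and propose a controlled fix."

Action (A)
- Rule out an application fault: curl 127.0.0.1:3000/api/health from inside the Grafana Pod to confirm the service is healthy and the port is listening.
- Locate the network layer: curl to the Pod IP from the node timed out, and a temporary curl Pod on the same node timed out as well, so it was not a host networking tool problem; the cluster network path was being blocked.
- Check the CNI and the tunnel: confirmed the cluster uses Cilium and that the VXLAN tunnel on 8472/UDP carries traffic, so the overlay itself works.
- Use observability to find the drop reason: ran cilium monitor --type drop and found the SYNs to Grafana:3000 explicitly marked Policy denied, with source identity remote-node/host.
- Review the NetworkPolicy: the Grafana namespace had a default NetworkPolicy allowing only Prometheus Pods to reach Grafana on 3000; node/host traffic was not on the allowlist and was rejected by Cilium.
- Fix: a CiliumNetworkPolicy allowing fromEntities: host, remote-node to Grafana on 3000/TCP. After that, curl from the node to the Pod IP recovered immediately.

Result (R)
"Grafana API access was restored and integration of the monitoring platform continued. I turned the steps into an SOP: application health check first → locate the layer → confirm the policy/drop reason with the CNI's observability tools, to avoid blindly changing firewalls or routes."

Extra highlight (technical summary)
"The key point in this kind of problem is that Cilium makes policy decisions around identity/entity. Node/host traffic usually shows up as remote-node/host, and an ipBlock in a plain K8s NetworkPolicy will not necessarily match it; you need fromEntities in a CiliumNetworkPolicy to allow it precisely."

You are a senior k8s cloud-native operations engineer. Do you think this problem has depth?

Yes. The depth is not in "Grafana is unreachable" as such, but in what you finally pinned down: under Cilium (eBPF) semantics, host/node traffic and Pod traffic have different identity models. That already goes beyond "knows how to use K8s". Let me break down, from an interviewer's point of view, why it counts as deep.

Why this problem has depth

1) It is not "the network is down", it is "the policy semantics do not line up"
What you saw first was a timeout, which easily sends you off checking VXLAN 8472/UDP, firewalls, routes, MTU, kube-proxy, Service/Endpoint. The actual root cause was: the NetworkPolicy only admitted Prometheus, node access becomes the host/remote-node identity in Cilium, and so it was dropped as Policy denied. This is a mismatch between the abstraction layer (K8s NetworkPolicy) and the implementation layer (Cilium identity/entity). It is a classic, and hard to pin down precisely.

2) You used the right advanced troubleshooting toolchain
Experienced people switch quickly to cilium monitor --type drop / hubble instead of continuing to guess. The line you captured:

    drop (Policy denied) ... identity remote-node->... tcp SYN

is conclusive evidence. Many people get stuck precisely because they cannot find out who dropped the packet and why.

3) This is knowledge about cluster behavior, not a bug in one component
What you solved was not Grafana and not curl, but:
- the CNI datapath
- identity-based enforcement
- node/host traffic classification
- the boundary between NetworkPolicy and CiliumNetworkPolicy

In production this kind of problem often shows up when:
- metrics / health checks probe Pods from nodes
- an ingress controller or node-local agent accesses business Pods
- daemons running on the node (probes outside a DaemonSet) access Pods

It is a common pain point in platform-level operations.

Interview view: is it worth telling?

Yes, especially for these roles:
- Kubernetes operations / platform engineering / cloud-native SRE
- Networking / security / observability
- Teams using Cilium, Calico or Istio

One caveat: the focus has to be "how I narrowed it down to Policy denied + identity", not "I changed a lot of YAML".

Roughly what depth level is this?

A fairly objective grading:
- Junior: can only say "add a NetworkPolicy to allow it"
- Intermediate: knows layered troubleshooting (curl inside the Pod, Service, routing)
- Senior: can see Policy denied with Cilium/Hubble and explain the host/remote-node identity
- Beyond senior: can go on to explain why ipBlock does not match, why fromEntities is needed, and how to standardize policies and avoid collateral damage

This time you have reached the senior threshold.

If you like, I can help you raise it one more notch by adding two sentences that tend to impress interviewers, for example:
- "Under Cilium we keep a separate fromEntities: host/remote-node allowlist for Node→Pod probe traffic, so it is not mixed with the business NetworkPolicies."
- "When I troubleshoot networking I look at the datapath drop reason first, to avoid guessing between overlay, iptables and policy."

Do you want to position this as your single most valuable case? I can polish it into a resume bullet while I am at it.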
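The "look at the drop reason first" habit can be supported by a small script that summarizes drop lines by reason, source identity and destination. The sketch below is an assumption-heavy illustration: the regular expression is written against the two abbreviated sample lines quoted in this post, the real `cilium monitor --type drop` output carries more fields and may differ between Cilium versions, and the names `DROP_RE`, `parse_drop` and `summarize` are made up.

```python
import re
from collections import Counter

# Pattern modeled on the sample lines in this post; the source port is optional
# because the quoted samples omit it.
DROP_RE = re.compile(
    r"drop \((?P<reason>[^)]+)\).*?"
    r"identity (?P<src_id>[\w-]+)->(?P<dst_id>[\w-]+): "
    r"(?P<src_ip>[\d.]+)(?::(?P<src_port>\d+))? -> "
    r"(?P<dst_ip>[\d.]+):(?P<dst_port>\d+) (?P<proto>\w+)"
)


def parse_drop(line: str):
    """Return the named fields of one drop line, or None if it does not match."""
    m = DROP_RE.search(line)
    return m.groupdict() if m else None


def summarize(lines) -> Counter:
    """Count drops per (reason, source identity, destination ip:port)."""
    counts = Counter()
    for line in lines:
        d = parse_drop(line)
        if d:
            counts[(d["reason"], d["src_id"], f'{d["dst_ip"]}:{d["dst_port"]}')] += 1
    return counts


SAMPLE = [
    "xx drop (Policy denied) ... identity 6820->29952: 10.244.0.206 -> 10.244.2.60:3000 tcp SYN",
    "xx drop (Policy denied) ... identity remote-node->29952: 10.244.0.18 -> 10.244.2.60:3000 tcp SYN",
]

if __name__ == "__main__":
    for (reason, src_id, dst), n in summarize(SAMPLE).items():
        print(f"{n}x {reason}: identity {src_id} -> {dst}")
```

Grouping by source identity rather than source IP mirrors the point of the retrospective: a row whose source identity is remote-node or host tells you that a podSelector or ipBlock rule is the wrong tool and an entity-based rule is needed.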

问题 调用 grafana 接口失败 error trying to reach service: dial tcp 10.244.2.60:3000: i/o timeout① 背景(30 秒) “有一次我们在 Kubernetes 集群里部署监控体系(Prometheus + Grafana),Grafana Pod 本身是 Running 的,但从节点或者其他组件访问 Grafana 的 Pod IP 的时候一直超时,curl 会直接 i/o timeout,影响监控平台的接入和联调。” “表面看 Grafana 是正常的,但网络层面存在异常。”② 问题拆解与排查思路(1 分钟) “我当时按分层排查的思路来做,而不是直接改网络策略。” 1️⃣ 先排除应用问题 我先 kubectl exec 进 Grafana Pod,直接 curl 127.0.0.1:3000/api/health 返回 200,说明 Grafana 本身没问题,端口监听正常 👉 这一步可以明确:不是应用、不是容器启动问题。 2️⃣ 再定位是 Service 层还是 Pod 网络问题 我确认 Service / Endpoint 都指向正确的 Pod IP 但从节点直接 curl PodIP 仍然超时 在同节点起一个临时 curl Pod 访问 PodIP,也同样超时 👉 这一步可以确定: 不是宿主机路由问题,而是 Pod 网络路径被拦截。 3️⃣ 确认 CNI 和隧道是否正常 集群使用的是 Cilium + VXLAN 我抓包看到 8472/UDP 的 VXLAN 流量是存在的 说明 overlay 隧道本身工作正常,否则整个集群通信都会异常 👉 到这里,网络连通性问题基本被排除。③ 关键突破点(40 秒) “真正的突破点是在 Cilium 的可观测工具 上。” 我在节点上执行了: cilium monitor --type drop 发现每一次访问 Grafana 3000 端口的 TCP SYN 都被丢弃 丢包原因非常明确:Policy denied 源身份显示为 remote-node 或 host 👉 这一步明确告诉我: 不是网络问题,而是被 Cilium 的策略明确拒绝了。④ 根因分析(30 秒) “回头检查发现,Grafana 所在命名空间里存在默认的 NetworkPolicy,只允许 Prometheus Pod 访问 Grafana 的 3000 端口。” 节点 / 宿主机访问 Grafana 时: 在 Cilium 里会被标记为 host 或 remote-node 并不属于普通 Pod identity 所以即使我用 Kubernetes NetworkPolicy 加了 ipBlock,依然无法命中这类流量 👉 本质原因是: Cilium 是以 identity/entity 为核心做策略决策,而不是单纯基于 IP。⑤ 解决方案(30 秒) “最终我使用的是 CiliumNetworkPolicy,显式放行来自节点的流量。” 在策略里允许: fromEntities: host fromEntities: remote-node 只放行到 Grafana 的 3000/TCP 应用策略后,从节点 curl PodIP 立刻恢复正常。⑥ 总结与价值(20 秒) “这个问题让我印象很深的一点是: 在使用 Cilium 这类 eBPF CNI 时,排查网络问题一定要用它自己的观测工具,而不是只停留在 Kubernetes NetworkPolicy 的抽象层。” “后续我也把这个问题整理成了 SOP,明确: Pod→Pod Node→Pod Service→Pod 在 Cilium 下对应的 identity 和策略差异,避免再踩坑。”面试官常见追问 & 标准答法(加分项) ❓追问 1:为什么 ipBlock 不生效? 答: “因为在 Cilium 里,Node / Host 流量优先按 identity(host / remote-node)匹配策略,而不是单纯按源 IP。 NetworkPolicy 的 ipBlock 在这类流量场景下不会命中,所以需要用 CiliumNetworkPolicy 的 fromEntities。” ❓追问 2:为什么不用 NodePort / LoadBalancer? 答: “NodePort 确实能绕开 Pod 网络策略,但会引入额外暴露面,不符合最小权限原则。 这里的目标只是节点内部访问 Pod 服务,用 CNI 级别策略精确放行更安全、更可控。” ❓追问 3:如何避免以后再发生? 
答: 明确区分 Pod 流量 vs Node/Host 流量 在启用 Cilium 的集群里: 默认 NetworkPolicy + 例外的 CiliumNetworkPolicy 遇到网络问题第一时间用 cilium monitor / hubble observe 定位丢包原因,而不是盲改规则 🧠 面试官听到的“隐藏信号” 你这段回答会传递出几个非常强的信号: 你理解 CNI 底层实现,不只是会写 YAML 你会用 可观测工具定位问题 你做的是 体系化排障,而不是拍脑袋改配置 你有 复盘和沉淀能力 如果你愿意,下一步我还能帮你: 把这个案例改成 简历上的一条高质量项目经历 或模拟一次面试官“深挖追问”的对话,帮你把回答打磨到非常顺 你这个案例,放在 K8s 运维 / 平台工程 / SRE 面试里,是妥妥的加分项。root@k8s-01:~# cat > /tmp/cnp-allow-grafana-3000-from-nodes.yaml <<'EOF' apiVersion: cilium.io/v2 kind: CiliumNetworkPolicy metadata: name: allow-grafana-3000-from-nodes namespace: kuboard spec: endpointSelector: matchLabels: app.kubernetes.io/name: grafana ingress: - fromEntities: - host - remote-node toPorts: - ports: - port: "3000" protocol: TCP EOF kubectl apply -f /tmp/cnp-allow-grafana-3000-from-nodes.yaml kubectl -n kuboard get cnp | grep allow-grafana-3000-from-nodes ciliumnetworkpolicy.cilium.io/allow-grafana-3000-from-nodes created allow-grafana-3000-from-nodes 0s True root@k8s-01:~# curl -sS -m 3 http://10.244.2.60:3000/api/health { "database": "ok", "version": "11.2.0", "commit": "2a88694fd3ced0335bf3726cc5d0adc2d1858855" }root@k8s-01:~# 可以了 你现在是一个资深的k8s运维工程师 你帮我详细复盘分析之前的上下文 然后总结根因是什么?怎么解决的?为什么会出现这种情况 然后我想把这个问题也在面试上比如面试官问我 工作当中遇到了什么问题 可以说这个 我是怎么遇到这个问题的 过程尝试了用什么方法解决 然后最后怎么找到问题的 怎么解决的 下面我按“资深 K8s 运维复盘”的方式,把你这次 Grafana Pod IP 不通的问题完整复盘一遍:现象 → 排查路径 → 关键证据 → 根因 → 解决方案 → 为什么会这样。最后再给你一段面试可直接复述的 STAR/故事版本。 1)现象是什么 Pod 里 Grafana 本身是好的: kubectl exec grafana -- curl 127.0.0.1:3000/api/health 返回 OK 日志正常、监听正常(Grafana 启动成功) 但是从 宿主机 / 节点 访问 Pod IP 超时: curl http://10.244.2.60:3000/api/health → i/o timeout 从 同节点的测试 Pod 访问 Pod IP 也超时: 你在 k8s-01 强制调度了 curlimages/curl 去 curl PodIP,仍然 timeout 这说明:不是“宿主机路由不通”,而是“到 Pod 的流量在某处被拦截/丢弃” 2)你做了哪些正确的排查动作(过程复盘) A. 先确认应用是否健康(排除应用问题) 你先在 grafana 容器内 curl 自己: 容器内 OK → Grafana 服务与端口没问题 这一步很关键:把“应用/容器问题”先排掉。 B. 再验证网络路径(区分 host、pod、service) kubectl get svc/ep 确认 endpoint 就是 10.244.2.60:3000 curl PodIP 超时,说明不是 Service 负载均衡层的问题,而是到 endpoint 的流量被拦截 C. 
检查 CNI 模式与隧道 你确认了 Cilium 配置: routing-mode: tunnel tunnel-protocol: vxlan 并且抓包能看到 8472/UDP 的 VXLAN 流量 进出(说明隧道本身在工作) 这一点也非常关键:overlay 通道是通的,否则集群内大量 Pod 通信都会异常。 D. 最关键的一步:用 Cilium 观测“丢包原因” 你执行: cilium monitor --type drop 立刻得到核心证据: xx drop (Policy denied) ... identity 6820->29952: 10.244.0.206 -> 10.244.2.60:3000 tcp SYN 以及后面: xx drop (Policy denied) ... identity remote-node->29952: 10.244.0.18 -> 10.244.2.60:3000 tcp SYN 这两条信息极其重要,等价于告诉你: 不是路由问题 不是端口没监听 是 Cilium 的策略(Policy)明确拒绝了 SYN 到这一步,根因已经锁定在 NetworkPolicy / CiliumPolicy 方向。 3)根因是什么(总结一句话版) Grafana 所在命名空间存在默认更严格的 NetworkPolicy(只允许 Prometheus Pod 访问 Grafana:3000),而节点/宿主机流量在 Cilium 里被标记成 remote-node/host 身份,不会被普通 K8s NetworkPolicy 的 ipBlock 命中,最终被 Cilium 以 Policy denied 丢弃。 再拆开说得更专业一点: 你原来的 NetworkPolicy(kuboard/grafana)是这样: ingress: - from: - podSelector: matchLabels: app.kubernetes.io/name: prometheus ports: - port: 3000 含义是: 只允许 prometheus 的 Pod 去访问 grafana 的 3000 其他来源(包括你从节点直接 curl PodIP)默认拒绝 为什么你加了 K8s NetworkPolicy(ipBlock)也没效果? 因为你的访问路径是“节点/宿主机 → Pod”: 从节点发起的流量到了 Cilium,会以 entity identity 来归类: host(本节点宿主机) remote-node(其他节点过来的 node traffic) 这类流量不是普通 Pod identity,很多情况下也不是单纯按源 IP去匹配 NetworkPolicy 的 ipBlock(尤其在 Cilium 的实现/模式下,优先按 identity 进行策略决策)。 所以你看到的现象就是: 你用 ipBlock: 192.168.1.0/24 放行 —— 依然被 remote-node identity 拒绝 你用 ipBlock: 10.244.0.0/16 放行 —— 依然被 remote-node identity 拒绝 而 cilium monitor 也明确显示拒绝原因是:Policy denied + identity remote-node->... 
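4) How it was solved (the approach that finally worked)

You ended up using a CiliumNetworkPolicy:

    apiVersion: cilium.io/v2
    kind: CiliumNetworkPolicy
    spec:
      endpointSelector:
        matchLabels:
          app.kubernetes.io/name: grafana
      ingress:
      - fromEntities:
        - host
        - remote-node
        toPorts:
        - ports:
          - port: "3000"
            protocol: TCP

The effect of this rule:

- It explicitly allows two Cilium entities:
  - host: traffic coming in from the local node's host
  - remote-node: node traffic coming from other nodes
- It only allows traffic to the grafana Pod on 3000/TCP.

That is why the curl to the Pod IP from k8s-01 succeeded immediately.

5) Why this happens (a more systematic explanation)

1) Monitoring stacks and platforms often ship their own NetworkPolicy

Many kube-prometheus-stack / platform suites create NetworkPolicy objects by default to implement “default deny + allowlist”, for example:

- Prometheus is allowed to scrape;
- other sources are not allowed to reach the UI/port by default.

This is a secure default, but during troubleshooting it produces the confusing picture of “the Pod is Running, yet access times out”.

2) Cilium has its own identity system for Node/Host traffic

Cilium's policy engine does not look only at IP addresses; identity/entity is the first-class concept:

- Pods have an identity (derived from labels).
- Node/Host/Remote-node are entities.

So the most reliable way to allow node→Pod traffic is fromEntities: host/remote-node. This is the fundamental reason your final fix worked.

3) “Pod→Pod works, Node→Pod does not” is a typical signature

Because:

- Pod→Pod traffic may be allowed by some policy (or match a selector within the same namespace);
- Node→Pod traffic is not something a podSelector can match, so it usually falls under default deny.

6) Interview: how to tell this as a good troubleshooting story

Below is a version you can memorize as is (STAR + technical details). Adjust a sentence or two to match your real environment and it is ready to use.

Interview version (aim for 2 to 3 minutes)

Situation (S)

“We deployed monitoring (Grafana/Prometheus) in a K8s cluster. The Grafana Pod was Running, but requests to the Grafana API from the nodes, made by business users and operations, kept failing with i/o timeout, which blocked onboarding and integration testing of the monitoring platform.”

Task (T)

“I needed to determine quickly whether this was an application problem, a service exposure problem, or a cluster network policy problem, and come up with a controlled fix.”

Action (A)

- Rule out application failure first: curl 127.0.0.1:3000/api/health from inside the Grafana Pod to confirm that the service is healthy and the port is listening.
- Locate the network layer: curl to the Pod IP from a node timed out, and a temporary curl Pod on the same node also timed out against the Pod IP, so this was not a problem with the host's network tooling; the cluster network path was being blocked.
- Check the CNI and tunnel: confirm that the cluster uses Cilium and that the VXLAN tunnel on 8472/UDP carries traffic, so the overlay itself works.
- Use observability to find the drop reason: run cilium monitor --type drop and see that SYNs to Grafana:3000 are explicitly marked Policy denied, with a source identity of remote-node/host.
- Check NetworkPolicy: the Grafana namespace has a default NetworkPolicy that only allows Prometheus Pods to reach Grafana on 3000; node/host traffic is not on the allowlist, so Cilium denies it.
- Fix the policy: use a CiliumNetworkPolicy that allows fromEntities: host, remote-node to Grafana on 3000/TCP; after the fix, curl to the Pod IP from the node recovered immediately.

Result (R)

“Access to the Grafana API was restored and the monitoring platform integration could continue. I also turned the troubleshooting steps into an SOP: check application health first → then locate the layer → finally use the CNI's observability tools to confirm the policy/drop reason, which avoids blind changes to firewalls or routes.”

Extra highlight (technical summary)

“The key point in this kind of problem is that Cilium makes policy decisions around identity/entity. Node/Host traffic usually shows up as remote-node/host, and an ipBlock in a plain K8s NetworkPolicy may not match it, so precise allowlisting needs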
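CiliumNetworkPolicy with fromEntities.”

You are a senior K8s cloud-native operations engineer. Do you think this problem has depth?

Yes. The depth is not in “Grafana is unreachable” as such, but in what you finally pinned down: under Cilium (eBPF) semantics, Host/Node traffic and Pod traffic have different identity models. That already goes beyond the level of “knows how to use K8s”.

Let me break down, from an interviewer's point of view, why it counts as deep.

Why this problem has depth

1) It is not “the network is down”, it is “the policy semantics differ”

What you saw at first was a timeout, which easily sends you off to check:

- VXLAN 8472/UDP, firewalls, routing, MTU, kube-proxy, Service/Endpoint

But the actual root cause was:

- the NetworkPolicy only allowed Prometheus;
- node access becomes the host/remote-node identity in Cilium;
- so it was dropped as Policy denied.

This is a mismatch between the abstraction layer (K8s NetworkPolicy) and the implementation layer (Cilium identity/entity). It is a classic problem and hard to pin down accurately.

2) You used the right advanced troubleshooting toolchain

Experienced engineers turn quickly to:

- cilium monitor --type drop / hubble

instead of continuing to guess. The line you captured:

    drop (Policy denied) ... identity remote-node->... tcp SYN

is the piece of evidence that settles the question. Many people get stuck in troubleshooting precisely because they cannot find out who dropped the packet and why.

3) This is knowledge about cluster behavior, not a bug in one component

What you solved was not Grafana and not curl, but:

- the CNI datapath
- identity-based enforcement
- node/host traffic classification
- the boundary between NetworkPolicy and CiliumNetworkPolicy

In production, this kind of problem often shows up when:

- metrics or health checks probe Pods from a node;
- an ingress controller or node-local agent accesses application Pods;
- daemons running on the node (probes outside a DaemonSet) access Pods.

It is a common pain point in platform-level operations.

Interview angle: is it worth telling?

Yes, especially for these roles:

- Kubernetes operations / platform engineering / cloud-native SRE
- networking / security / observability
- teams that use Cilium, Calico, or Istio

One thing to keep in mind: the focus of your story must be how you got to “Policy denied + identity”, not “I changed a lot of YAML”.

Roughly where does this sit on a “depth scale”?

I would grade it fairly objectively like this:

- Junior: can only say “add a NetworkPolicy to allow it”.
- Intermediate: knows layered troubleshooting (curl inside the Pod, Service, routing).
- Senior: can use Cilium/Hubble to see Policy denied and explain the host/remote-node identity.
- Beyond senior: can go on to explain why ipBlock does not match, why fromEntities is needed, and how to standardize policies and avoid collateral damage.

This time you have reached the senior threshold.

If you like, I can help you take it one notch higher by adding two sentences that tend to impress interviewers, for example:

- “Under Cilium we keep a separate fromEntities: host/remote-node allowlist for Node->Pod probe traffic, so that it is not mixed with application NetworkPolicy.”
- “When I troubleshoot networking, I look at the datapath drop reason first, so I don't guess blindly between overlay, iptables, and policy.”

Do you want to position this as your single most valuable case? I can polish it into a résumé bullet while I am at it.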
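The idea of keeping Node->Pod probe allowlists in their own policy, separate from application NetworkPolicy, could be sketched as a small manifest generator. This is only a sketch under assumptions: the function name node_probe_allowlist and its parameters are hypothetical, the manifest fields mirror the CiliumNetworkPolicy shown earlier in this conversation, and JSON is emitted because kubectl apply -f accepts JSON as well as YAML.

```python
import json


def node_probe_allowlist(name, namespace, pod_labels, port,
                         entities=("host", "remote-node")):
    """Build a CiliumNetworkPolicy dict that allows node-originated TCP traffic.

    Hypothetical helper: it only assembles the same fields as the policy
    applied in this conversation and performs no validation against a cluster.
    """
    return {
        "apiVersion": "cilium.io/v2",
        "kind": "CiliumNetworkPolicy",
        "metadata": {"name": name, "namespace": namespace},
        "spec": {
            # Select the Pods the rule applies to by label.
            "endpointSelector": {"matchLabels": dict(pod_labels)},
            "ingress": [
                {
                    # Node-originated traffic is matched by entity, not by IP.
                    "fromEntities": list(entities),
                    # Cilium expects the port as a string.
                    "toPorts": [
                        {"ports": [{"port": str(port), "protocol": "TCP"}]}
                    ],
                }
            ],
        },
    }


# Values taken from the conversation above.
policy = node_probe_allowlist(
    name="allow-grafana-3000-from-nodes",
    namespace="kuboard",
    pod_labels={"app.kubernetes.io/name": "grafana"},
    port=3000,
)

if __name__ == "__main__":
    print(json.dumps(policy, indent=2))
```

Generating one such policy per probed workload keeps the node allowlist reviewable on its own, which is the separation the first sample sentence above describes.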